Jounce, Crackle and Pop

I saw this T-shirt recently, and didn't get it. (The joke or the T-shirt.)

It turns out that the third derivative of displacement \(x\) with respect to time \(t\) — that is, the derivative of acceleration \(\mathbf{a}\) — is called 'jerk' (or sometimes, boringly, jolt, surge, or lurch) and is measured in units of m/s³.

So far, so hilarious, but is it useful? It turns out that it is. Since the force \(\mathbf{F}\) on a mass \(m\) is given by \(\mathbf{F} = m\mathbf{a}\), you can think of jerk as proportional to the rate of change of force on a constant mass. The lurch you feel at the onset of a car's acceleration? That's jerk. The designers of transport systems and rollercoasters manage it daily.

$$ \mathrm{jerk,}\ \mathbf{j} = \frac{\mathrm{d}^3 x}{\mathrm{d}t^3}$$
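To spell out the link between jerk and force, here is a one-line derivation. It assumes the mass \(m\) is constant, which the text above does not state explicitly. Differentiating \(\mathbf{F} = m\mathbf{a}\) with respect to time gives:

$$ \frac{\mathrm{d}\mathbf{F}}{\mathrm{d}t} = m\,\frac{\mathrm{d}\mathbf{a}}{\mathrm{d}t} = m\,\mathbf{j} $$

So a constant force means zero jerk, and any change in the applied force shows up as a non-zero jerk.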

Here's a visualization of velocity (green line) of a Tesla Model S driving in a parking lot. The coloured stripes show the acceleration (upper plot) and the jerk (lower plot). Notice that the peaks in jerk correspond to changes in acceleration.
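As a rough sketch of how acceleration and jerk curves like these could be estimated from sampled velocity, here is one approach using finite differences. This is not necessarily how the notebook does it. The function name `derivatives_from_velocity` and the synthetic cubic velocity profile are assumptions made for illustration, and the Tesla data is not used.

```python
import numpy as np

def derivatives_from_velocity(t, v):
    """Estimate acceleration and jerk from velocity samples v at times t."""
    t = np.asarray(t, dtype=float)
    v = np.asarray(v, dtype=float)
    a = np.gradient(v, t)  # dv/dt, in m/s^2
    j = np.gradient(a, t)  # da/dt, in m/s^3
    return a, j

# Synthetic example (not the Tesla data): v = t^3, so a = 3t^2 and j = 6t.
t = np.linspace(0.0, 10.0, 101)
v = t**3
a, j = derivatives_from_velocity(t, v)
```

Each differentiation amplifies noise, and the values near the ends of the arrays are less accurate because `np.gradient` falls back to one-sided differences there. With real logged data you would probably want to smooth the velocity first.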

The snap you feel at the start of the lurch? That's jounce — the fourth derivative of displacement and the derivative of jerk. Eager et al (2016) wrote up a nice analysis of these quantities for the examples of a trampolinist and roller coaster passenger. Jounce is sometimes called snap... and the next two derivatives are called crackle and pop.

What about momentum?

If the momentum \(\mathbf{p}\) of a mass \(m\) moving at a velocity \(\mathbf{v}\) is \(m\mathbf{v}\) and \(\mathbf{F} = m\mathbf{a}\), what is mass times jerk? According to the physicist Philip Gibbs, who investigated the matter in 1996, it's called yank:

“Momentum equals mass times velocity.

Force equals mass times acceleration.

Yank equals mass times jerk.

Tug equals mass times snap.

Snatch equals mass times crackle.

Shake equals mass times pop.”

There are jokes in there, help yourself.

What about integrating?

Clearly the integral of jerk is acceleration, and that of acceleration is velocity, the integral of which is displacement. But what is the integral of displacement with respect to time? It's called absement, and it's a pretty peculiar quantity to think about. In the same way that an object with linearly increasing displacement has constant velocity and zero acceleration, an object with linearly increasing absement has constant displacement and zero velocity. (Constant absement at zero displacement gives rise to the name 'absement': an absence of displacement.)

Integrating displacement over time might be useful: the area under the displacement curve for a throttle lever could conceivably be proportional to fuel consumption for example. So absement seems to be a potentially useful quantity, measured in metre-seconds.
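A minimal sketch of computing absement from sampled displacement, assuming cumulative trapezoidal integration is an acceptable approximation. The helper `cumulative_integral` and the constant 2 m lever displacement are hypothetical, chosen so the result is easy to check by hand.

```python
import numpy as np

def cumulative_integral(t, y):
    """Cumulative trapezoidal integral of y with respect to t, starting at zero."""
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    steps = 0.5 * (y[1:] + y[:-1]) * np.diff(t)  # area of each trapezoid
    return np.concatenate(([0.0], np.cumsum(steps)))

# Hypothetical lever held at a constant displacement of 2 m for 10 s.
t = np.linspace(0.0, 10.0, 101)
x = np.full_like(t, 2.0)
absement = cumulative_integral(t, x)  # in metre-seconds; here it equals 2 * t
```

With constant displacement the absement grows linearly, as described above. Applying the same function to its own output integrates once more.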

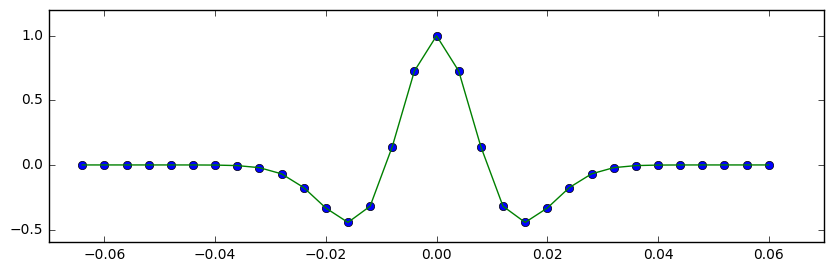

Integrate absement and you get absity (a play on 'velocity'). Keep going and you get abseleration, abserk, and absounce. Are these useful quantities? I don't think so. A quick look at them all — for the same Tesla S dataset I used before — shows that the loss of detail from multiple cumulative summations makes for rather uninformative transformations:

You can reproduce the figures in this article with the Jupyter Notebook Jerk_jounce_etc.ipynb. Or you can launch a Binder right here in your browser and play with it there, without installing a thing!

References

David Eager et al (2016). Beyond velocity and acceleration: jerk, snap and higher derivatives. Eur. J. Phys. 37 065008. DOI: 10.1088/0143-0807/37/6/065008

Amarashiki (2012). Derivatives of position. The Spectrum of Riemannium blog, retrieved on 4 Mar 2018.

The dataset is from Jerry Jongerius's blog post, The Tesla (Elon Musk) and New York Times (John Broder) Feud. I have no interest in the 'feud', I just wanted a dataset.

The T-shirt is from Chummy Tees; the image is their copyright and used here under Fair Use terms.

The vintage Snap, Crackle and Pop logo is copyright of Kellogg's and used here under Fair Use terms.

Except where noted, this content is licensed
