What is an Ormsby wavelet anyway?

If you dabble in reflection seismic analysis, you probably know the Ricker wavelet. We’ve visited it a few times on this blog: Evan once showed how to make and plot one, I looked at some analytic properties of it, and we even played golf with it.

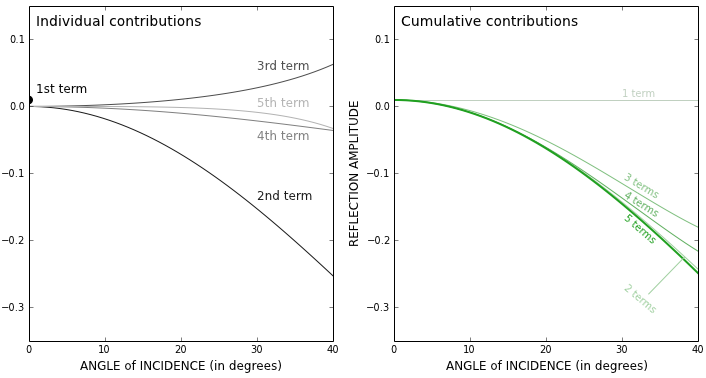

The Ricker is everywhere, but it has an important limitation — bandwidth. Its shape in the frequency domain is roughly Gaussian (below, left), which is the reason it only really has one parameter: the central frequency. This kind of spectrum is sometimes okay for older seismic surveys, especially marine data, because it matches the bandwidth reasonably. But for modern surveys, or even old land data, we often want a broader set of frequencies — more of a trapezoidal spectral shape. We need the Ormsby wavelet:

How to make an Ormsby wavelet

The earliest reference I can find to the Ormsby wavelet is an article by Harold Ryan entitled ‘Ricker, Ormsby, Klauder, Butterworth — a choice of wavelets’, in the September 1994 issue of the CSEG Recorder. It’s not at all clear who Ormsby was, other than “an aeronautical engineer”. And I don’t think anyone outside exploration geophysics knows what an Ormsby is; they just call it a ‘bandpass filter’.

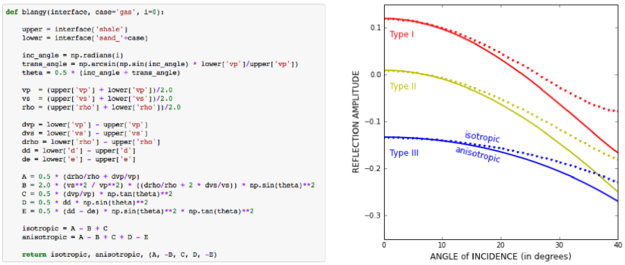

Ryan helpfully provided both a time-domain analytic expression (which turns out to use the classical definition of the sinc function) and a plot:

The equation in Ryan, and my modified Figure 3 (right). The result of the equation is in red.

This equation does not produce the wavelet (black) in the plot; instead, it produces the one I’ve added in red. If you find this surprising, you shouldn’t: in my experience, it’s very common for the words and/or maths in a paper not to match its figures. [Edit: as noted above, in this case it’s because of how NumPy’s sinc function is defined; see the comment from Robert Kern, below.] We established this at the SEG Repro Zoo in 2018. If an author is not required to produce code or data, it’s not very surprising; and even if they do, the peer review system is not set up for referees to do this kind of check. Apart from anything else, it’s far too onerous. But I digress.

After some fiddling around, I realized that the expression being passed to NumPy’s sinc function should be \(ft\), not \(\pi ft\). This produces a result that matches the figure almost exactly (and, counting wiggles, has the right frequency). So here’s the result of that new expression, shown in green here with the original figure (black) and the same red wavelet as above:

This green thing is the wavelet implemented in bruges so it’s easy to produce it; the arguments are the duration (0.4 seconds), the sample interval dt (4 ms) and the corner frequencies f (5, 10, 40, and 45 Hz respectively):

bruges.filters.ormsby(duration=0.4, dt=0.004, f=[5, 10, 40, 45])
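To see the arithmetic rather than call a library, here is a minimal NumPy sketch of the same idea. It assumes the commonly quoted four-corner Ormsby expression and is not copied from bruges or from Ryan’s article; the name `ormsby_sketch` and its `pi_in_sinc` flag are invented for illustration. The thing to notice is that `np.sinc(x)` computes sin(πx)/(πx), so the argument should be ft, not πft. Setting the flag reproduces the mistake, which should correspond roughly to the red curve.

```python
import numpy as np

def ormsby_sketch(duration=0.4, dt=0.004, f=(5, 10, 40, 45), pi_in_sinc=False):
    """Four-corner Ormsby wavelet, normalized to unit peak (illustrative only)."""
    f1, f2, f3, f4 = f
    n = int(round(duration / dt)) + 1
    t = (np.arange(n) - n // 2) * dt       # time axis in seconds; t[n // 2] is exactly 0

    def term(fc):
        # np.sinc(x) is sin(pi x) / (pi x), so pass fc*t to get sin(pi fc t) / (pi fc t).
        # pi_in_sinc=True reproduces the mistake of passing pi*fc*t instead.
        x = np.pi * fc * t if pi_in_sinc else fc * t
        return (np.pi * fc) ** 2 * np.sinc(x) ** 2

    high = (term(f4) - term(f3)) / (np.pi * (f4 - f3))   # upper ramp, f3 to f4
    low = (term(f2) - term(f1)) / (np.pi * (f2 - f1))    # lower ramp, f1 to f2
    w = high - low
    return w / np.max(np.abs(w)), t

w_good, t = ormsby_sketch()                  # argument is f*t
w_bad, _ = ormsby_sketch(pi_in_sinc=True)    # argument is pi*f*t
```

Plotting `w_good` and `w_bad` against `t` should show two wavelets with the same peak but different wiggle counts, since the extra factor of π squeezes the second one in time.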

What about other examples from the literature?

Good question! Apart from my own Python code in bruges, I did find one or two other implementations:

- A student project from Montclair State University. It produces a similar wavelet, but it’s not quite the same as bruges.
- seismic.jl, the preeminent seismic package for Julia. This matches bruges but then applies a Hamming window.
- GPIAG/SOFI2D software from the Karlsruhe Institute of Technology. This MATLAB/Octave code matches bruges.
- Miong et al. (2007) does not give an implementation, only a plot. I digitized it but can’t quite match it. Have a go!

So it seems from this tiny experiment that only one of the implementations I found matched the figure in the Ryan article perfectly. The other wavelets are variations on the theme. Which is probably fine — after all, they are all only models for real seismic impulses — but in the interests of scientific reproducibility, I think it underscores the importance of transparent methodology and publishing your code.

Update on 9 Feb: A conversation in Software Underground revealed that Petrel’s version of the Ormsby wavelet matches the bruges implementation, but with a triangular window multiplied in (similar to how a Hamming window is multiplied into the seismic.jl version).
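The windowed variants mentioned above (Hamming in seismic.jl, triangular in Petrel) can be mimicked by multiplying the wavelet by a taper of the same length. The sketch below uses NumPy’s `np.hamming` and `np.bartlett`. The window length and any renormalization those packages actually apply are assumptions here, so treat this as an illustration of the idea only. The compact `ormsby` helper is hypothetical and stands in for whichever implementation you prefer.

```python
import numpy as np

def ormsby(t, f=(5, 10, 40, 45)):
    """Unwindowed Ormsby wavelet on time axis t (illustrative helper)."""
    f1, f2, f3, f4 = f

    def term(fc):
        # np.sinc is the normalized sinc, so the argument is fc*t, not pi*fc*t.
        return (np.pi * fc) ** 2 * np.sinc(fc * t) ** 2

    w = (term(f4) - term(f3)) / (np.pi * (f4 - f3)) \
        - (term(f2) - term(f1)) / (np.pi * (f2 - f1))
    return w / np.max(np.abs(w))

dt, n = 0.004, 101                      # 4 ms sampling, 0.4 s long
t = (np.arange(n) - n // 2) * dt        # symmetric time axis centred on zero
w = ormsby(t)

w_hamming = w * np.hamming(n)           # Hamming taper (assumed full-length)
w_triangular = w * np.bartlett(n)       # triangular taper with zero endpoints
```

Both tapers equal 1 at the centre sample, so the peak is untouched and only the side lobes are damped, which may be why the windowed versions look like variations on the theme rather than different wavelets.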

I pushed my Python Jupyter Notebook to the new repro-zoo repository on GitHub. Please feel free to fork this project and add your own attempted reproductions of computational geoscience papers.

The original repro-zoo repo from the 2018 event is on SEG’s GitHub.

References

Ryan, H (1994). Ricker, Ormsby, Klauder, Butterworth — a choice of wavelets. CSEG Recorder 19 (7). Available online.

Miong, S-K, R R Stewart and J Wong (2007). Characterizing the near surface with VSP and well logs. CREWES Research Report 19. Available online.

Except where noted, this content is licensed
