x lines of Python: static basemaps with contextily

Difficulty rating: Beginner

When planning work, it is often useful to have a basemap of the area where you have data or an interest. You can make one with a number of different tools, up to and including full-fledged GIS software, but we will use contextily for a quick static basemap in Python. Installation is as simple as conda install contextily or pip install contextily.

The steps that we want to take are the following, expressed in plain English, each of which will roughly be one line of code:

- Get a source for our basemap (placenames and similar things)

- Get a source for our geological map

- Get the location that we would like to map

- Plot the location with our geological data

- Add the basemap to our geological map

- Add the attribution for both maps

- Plot our final map

We will start with the imports, which as usual do not count:

import contextily as ctx
import matplotlib.pyplot as plt

Contextily has a number of built-in providers of map tiles, which can be accessed through the ctx.providers dictionary. The dictionary is nested, since some providers offer several tile styles. One example is ctx.providers.OpenStreetMap.Mapnik, which contains the following:

{'url': 'https://{s}.tile.openstreetmap.org/{z}/{x}/{y}.png',

'max_zoom': 19,

'attribution': '(C) OpenStreetMap contributors',

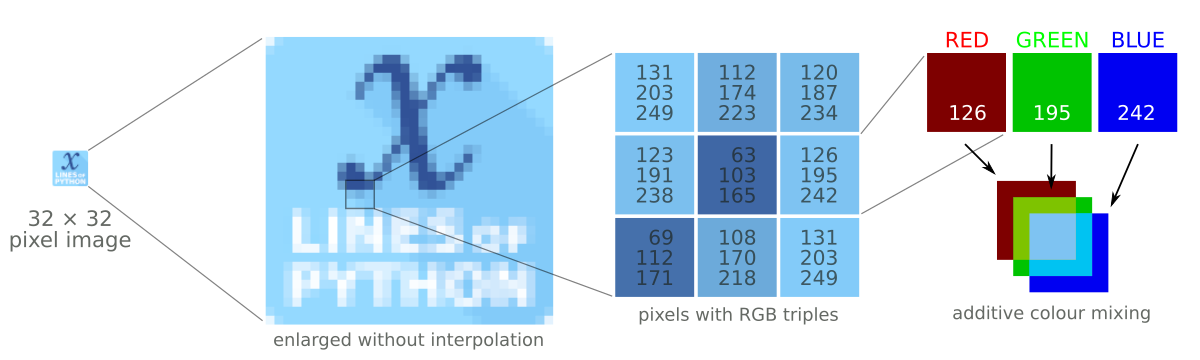

'name': 'OpenStreetMap.Mapnik'}The most important parameter in the dictionary for each provider is the url. These are of the form example.com/{z}/{x}/{y}.png. The {z} is the zoom level, while {x} and {y} relate to the latitude and longitude of a given tile, respectively. Note that these are the same as those used by interactive Slippy maps; contextily just downloads them as a single static image.
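To make the {z}/{x}/{y} pattern concrete, here is a minimal sketch of how a longitude and latitude map onto tile indices under the standard Web Mercator slippy-map scheme. The function names and the coordinates (roughly Halifax) are illustrative assumptions, not part of contextily, which takes care of this for you.

```python
import math


def lonlat_to_tile(lon, lat, zoom):
    """Return the (x, y) indices of the slippy-map tile containing a point."""
    n = 2 ** zoom  # number of tiles along each axis at this zoom level
    # x counts columns eastward from longitude -180
    x = int((lon + 180.0) / 360.0 * n)
    # y counts rows southward from the top of the Web Mercator square
    lat_rad = math.radians(lat)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # clamp so that points on the far edges still land on a valid tile
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def tile_url(template, lon, lat, zoom, subdomain="a"):
    """Fill a provider URL template for the tile containing a point."""
    x, y = lonlat_to_tile(lon, lat, zoom)
    # str.format ignores unused keywords, so templates without {s} also work
    return template.format(s=subdomain, z=zoom, x=x, y=y)


url_template = "https://{s}.tile.openstreetmap.org/{z}/{x}/{y}.png"
print(tile_url(url_template, lon=-63.57, lat=44.65, zoom=7))
```

Each step up in zoom doubles the number of tiles along each axis, which is why high zoom levels over large areas quickly become slow to download.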

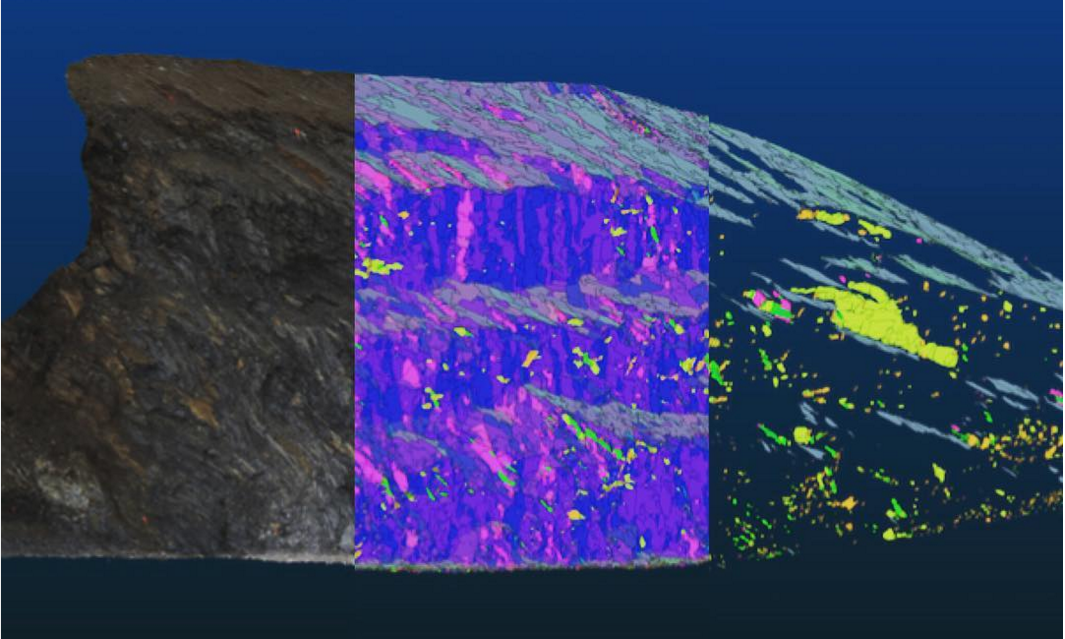

The easiest option is to use one of these providers, but we can also define our own, using the above pattern for the URL. For geological data, the Macrostrat project is a great resource, especially because it has a tile server supplying some detail. Their tile server can be added using

geology_tiles = 'https://tiles.macrostrat.org/carto/{z}/{x}/{y}.png'

We also need a place to map. Contextily has a geocoder that can return the tiles covering a given location. It uses OpenStreetMap, so anything that is present there is usable as a location. This includes countries (e.g. 'Paraguay'), provinces/states ('Nova Scotia'), cities ('Lubumbashi'), and so on.
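As a rough illustration of what "the tiles covering a given location" means, the sketch below lists the tiles that overlap a bounding box at one zoom level. It is not contextily's actual implementation, and the bounding box is an approximate, hand-picked stand-in for what a geocoder would return for Nova Scotia.

```python
import math


def lonlat_to_tile(lon, lat, zoom):
    """Return the (x, y) indices of the slippy-map tile containing a point."""
    n = 2 ** zoom
    x = int((lon + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(math.radians(lat))) / math.pi) / 2.0 * n)
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def tiles_covering(west, south, east, north, zoom):
    """List the (z, x, y) tiles that overlap a lon/lat bounding box."""
    x_min, y_min = lonlat_to_tile(west, north, zoom)  # top-left corner
    x_max, y_max = lonlat_to_tile(east, south, zoom)  # bottom-right corner
    return [
        (zoom, x, y)
        for x in range(x_min, x_max + 1)
        for y in range(y_min, y_max + 1)
    ]


# Approximate bounding box (west, south, east, north); an assumption for
# illustration only, a geocoder would supply the real extent.
NOVA_SCOTIA_BBOX = (-66.5, 43.3, -59.6, 47.1)
GEOLOGY_TILES = "https://tiles.macrostrat.org/carto/{z}/{x}/{y}.png"

tiles = tiles_covering(*NOVA_SCOTIA_BBOX, zoom=7)
urls = [GEOLOGY_TILES.format(z=z, x=x, y=y) for z, x, y in tiles]
print(len(urls), "tiles, starting with", urls[0])
```

Nothing is downloaded here; the sketch only builds the URLs that a tile client would go on to request and stitch together.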

We will use Nova Scotia as our area of interest and pass in our desired map tiles as the source. Calling .plot() on the Place object gives an immediate look at the area, drawn with those tiles.

ctx.Place('Nova Scotia', source=ctx.providers.CartoDB.Positron).plot()

The Positron style from Carto for Nova Scotia.

We'll use a different basemap though:

basemap = ctx.providers.Stamen.Toner

We can create the Place with our desired source (geology_tiles in this case) and then draw the basemap on top of it with some transparency. We will also add an attribution, since we need to credit Macrostrat.

place = ctx.Place('Nova Scotia', source=geology_tiles)
base_ax = place.plot()
ctx.add_basemap(ax=base_ax, source=basemap, alpha=0.5)
text = basemap.attribution + ' | Geological data: MacroStrat.org (CC-BY)'
ctx.add_attribution(ax=base_ax, text=text)

Finally, after a plt.show() call, we get the following:

Obviously this is still missing some important things, like a proper legend, but as a quick overview of what we can expect in a given area, it is a good approach. This workflow is probably better suited for general location maps.
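If you want to repeat this for several areas, the steps above could be wrapped in a small function. This is only a sketch under a few assumptions: the names plot_geology and build_attribution are hypothetical, the default basemap (OpenStreetMap.Mapnik) is my choice rather than the Toner style used above, and running it needs contextily installed plus network access.

```python
GEOLOGY_TILES = "https://tiles.macrostrat.org/carto/{z}/{x}/{y}.png"
GEOLOGY_CREDIT = "Geological data: MacroStrat.org (CC-BY)"


def build_attribution(*credits):
    """Join the non-empty credit strings with ' | '."""
    return " | ".join(c for c in credits if c)


def plot_geology(place_name, basemap=None, alpha=0.5):
    """Plot Macrostrat geology for a place with a semi-transparent basemap."""
    # Imported here so the helper above works without contextily installed.
    import contextily as ctx

    if basemap is None:
        # Assumed default for this sketch; pass any other provider instead.
        basemap = ctx.providers.OpenStreetMap.Mapnik

    place = ctx.Place(place_name, source=GEOLOGY_TILES)  # geocode and fetch
    ax = place.plot()                                     # geology layer
    ctx.add_basemap(ax=ax, source=basemap, alpha=alpha)   # basemap on top
    # A plain URL string has no attribution, hence the getattr fallback.
    text = build_attribution(getattr(basemap, "attribution", ""), GEOLOGY_CREDIT)
    ctx.add_attribution(ax=ax, text=text)
    return ax
```

A call such as plot_geology('Nova Scotia') followed by plt.show() should then give a map similar to the one above.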

Contextily also plays well with geopandas, allowing for an easy locality map of a given GeoDataFrame. Check out the accompanying Notebook for an example.

Except where noted, this content is licensed
