- Some humans are scientists.

- No non-humans are scientists.

- Therefore, scientists are human.

That's how scientists think, right? Logical, deductive, objective, algorithmic. Put in such stark terms, this may seem over the top, but I think scientists do secretly think of themselves this way. Our skepticism makes us immune to the fanciful, emotional naïvetés that normal people believe. You can't fool a scientist!

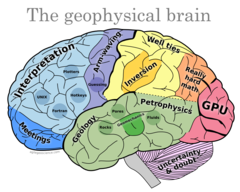

Except of course you can. Just like everyone else's, scientists' intuition is flawed, infested with biases like subjectivity and the irresistible need to seek confirmation of hypotheses. I say 'everyone', but perhaps scientists are biased in obscure, profound ways that non-specialists are not. A scary thought.

But sometimes I hear scientists say things that are especially subtle in their wrongness. Don't get me wrong: I wholeheartedly believe these things too, until I stop for a moment and reflect. Here are some examples:

The scientific method

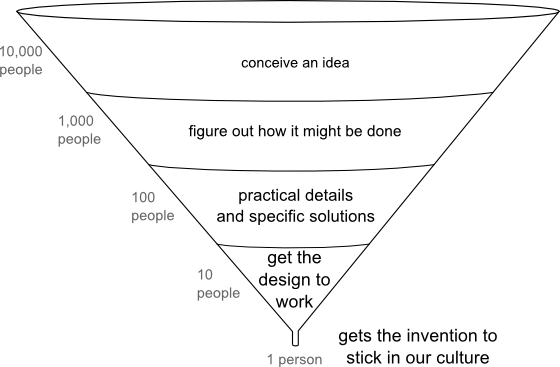

...as if there is but one method. To see how wrong this notion is, stop and try to write down how your own investigations proceed. The usual recipe is something like: question, hypothesis, experiment, adjust hypothesis, iterate, and conclude with a new theory. Now look at your list and ask yourself whether that's really how it goes, or whether it's actually full of false leads, failed experiments, random shots in the dark and a brain fart or two. Or maybe that's just me.

If not thesis then antithesis

...as if there is no nuance or uncertainty in the world. We treat bipolar disorder in people, but seem to tolerate it and even promote it in society. Arguments quickly move to the extremes, becoming ludicrously over-simplified in the process. Example: we need to have an even-tempered, fact-based discussion about our exploitation of oil and gas, especially in places like the oil sands. This discussion is difficult to have because if you're not with 'em, you're against 'em.

Nature follows laws

...as if nature is just a good citizen of science. Without wanting to fall into the abyss of epistemology here, I think it's important to know at all times that scientists are trying to describe and represent nature. Thinking that nature is following the laws that we derive on this quest seems to me to encourage an unrealistically deterministic view of the world, and smacks of hubris.

How vivid is the claret, pressing its existence into the consciousness that watches it! If our small minds, for some convenience, divide this glass of wine, this universe, into parts — physics, biology, geology, astronomy, psychology, and so on — remember that Nature does not know it!

Richard Feynman

Science is true

...as if knowledge consists of static and fundamental facts. It's that hubris again: our diamond-hard logic and 1024-node clusters are exposing true reality. A good argument with a pseudoscientist always convinces me of this. But it's rubbish—science isn't true. It's probably about right. It works most of the time. It's directionally true, and that's the way you want to be going. Just don't think there's a True Pole at the end of your journey.

There are probably more, but I read or hear an example of at least one of these a week. I think these fallacies are a class of cognitive bias peculiar to scientists. A kind of over-endowment of truth. Or perhaps they are examples of a rich medley of biases, each of us with our own recipe. Once you know your recipe and have learned its smell, be on your guard!

Your employer owns your products. They pay you for concerted effort on things they need, and to have their socks knocked off occasionally. But they don't own your creativity, judgment, insight, and ideas: the things that make you a professional. They own their data, and their tools, and their processes, but they don't own the people or the intellects that created them. And they can't, or shouldn't be able to, stop you from going out into the world and being an active, engaged professional, free to exercise and discuss our science with whomever you like.

Except where noted, this content is licensed
