Test driven development geoscience

Sometimes I wonder how much of what we do in applied geoscience is really science. Is it really about objective enquiry? Do we form hypotheses, then test them? The scientific method is largely a caricature — science is more accidental and more fun than a step-by-step recipe — but I think our field sometimes falls short of even basic rigour. Go and sit through a conference session on seismic attribute analysis some time and you'll see what I mean. Let's just say there's a lot of arm-waving and shape-ology.

Learning from geeks

We've written before about the virtues of the software engineering community. Innovation there has been so rapid recently that I think it's a great place to find interpretation hacks like pair picking. Learning about and experiencing the amazing productivity of programmers is one of the reasons I think all scientists should learn to program (but not learn to be a programmer). You'll find out about concepts like version control, user-centered design, and test-driven development. Programmers embrace these ideas to a greater or lesser degree, depending on their goals and those of the project they're working on. But all programmers know them.

I'm especially into test-driven development at the moment. The idea is that before implementing a new module or feature, you write a test: a short program that gives the new thing some input, inspects the output, and compares it to a known answer. The first version of the code will probably fail the test, so you rework the code until it passes. Then you add that test to a suite that runs every time you build anything in the same project, so you'll find out if something else breaks your new feature later. Writing the test first has another benefit: you aren't tempted to implement features the test doesn't call for.
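To make the loop concrete, here is a minimal sketch in Python using pytest-style test functions. The function `reflection_coefficient` and the file name are hypothetical, chosen only for illustration; the known answers come from the normal-incidence reflection coefficient, R = (Z2 - Z1) / (Z2 + Z1). In true test-first fashion, the three tests would be written while the function body was still a stub, and they would fail until the formula went in.

```python
# test_reflectivity.py (hypothetical file name); run with: pytest test_reflectivity.py
# In a real project the function would live in its own module; it is inlined here for brevity.
import math


def reflection_coefficient(z1, z2):
    """Normal-incidence reflection coefficient at an interface.

    z1 is the acoustic impedance above the interface, z2 the impedance below.
    """
    return (z2 - z1) / (z2 + z1)


# These tests are written first. While the body above is only a stub
# (say, `raise NotImplementedError`), every one of them fails.

def test_known_answer():
    # Impedance triples across the interface: R = (3 - 1) / (3 + 1) = 0.5
    assert math.isclose(reflection_coefficient(1.0, 3.0), 0.5)


def test_no_contrast_gives_zero():
    # Identical impedances above and below produce no reflection.
    assert reflection_coefficient(2.0, 2.0) == 0.0


def test_sign_flips_when_order_is_reversed():
    # Going from high to low impedance gives a negative coefficient.
    assert math.isclose(reflection_coefficient(3.0, 1.0), -0.5)
```

Once these pass, they join the suite and run on every build, so a later change that breaks the formula shows up straight away.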

Fail — Refactor — Pass

Imagine test-driven development geology (or any other kind of geoscience). What would that look like?

- When planning wells, we often do write tests — they're called prognoses. But the comparison with the result is rarely formalized or quantified, especially outside the target zone. Once the well is drilled, it becomes data and we move on. No-one likes to dwell on the poorly understood or error-prone, but naturally that's where the greatest room for improvement is.

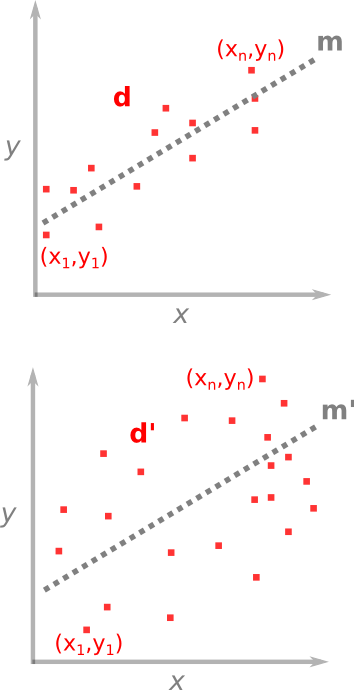

- When designing a new seismic attribute, or embarking on a seismic processing project, we often have a vague idea of success in our heads, and that's about it. What if we explicitly defined an input test dataset, some wells or bits of wells, and set 'passing' performance criteria on those? "I won't interpret the reprocessed seismic until it improves those synthetic correlation coefficients by 40%."

- When designing a seismic survey, we could establish acceptable criteria for trace density, minimum offset, azimuth distribution, and recording time, then use these as a cost function to find the best possible survey for our dollars. Wait, perhaps we actually do this one. Is seismic acquisition unusually scientific? Or is it an inherently more linear problem?
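The reprocessing example in the second bullet can be written down as an explicit pass/fail check before the new volume arrives. This is a minimal sketch, assuming each well contributes one synthetic seismogram plus the seismic trace at that well, both sampled on the same time axis, and reading "40%" as a relative increase in the mean Pearson correlation coefficient. All function names are hypothetical.

```python
import numpy as np


def well_tie_correlation(synthetic, trace):
    """Pearson correlation between a well synthetic and the seismic trace at that well.

    Both arrays are assumed to share the same time axis and window.
    """
    return float(np.corrcoef(synthetic, trace)[0, 1])


def mean_tie_correlation(ties):
    """Mean correlation over a list of (synthetic, trace) pairs, one pair per well."""
    return float(np.mean([well_tie_correlation(s, t) for s, t in ties]))


def reprocessing_passes(old_ties, new_ties, required_gain=0.40):
    """Acceptance test: does the reprocessed volume raise the mean tie correlation
    by at least `required_gain` (0.40 means 40%) relative to the old volume?

    Assumes the old mean correlation is positive; a relative gain means little otherwise.
    """
    old = mean_tie_correlation(old_ties)
    new = mean_tie_correlation(new_ties)
    return new >= old * (1.0 + required_gain)
```

Because the criterion is fixed in advance, the answer is a plain yes or no. Note that a 40% relative gain is only reachable if the baseline mean correlation is below 1/1.4, about 0.71; with a better baseline, an absolute threshold might be the more sensible test.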

What do you think? Can you see ways to define 'success' before you begin, then somewhat quantitatively compare your results with that? Ideas wanted!

Except where noted, this content is licensed