A stupid seismic model from core

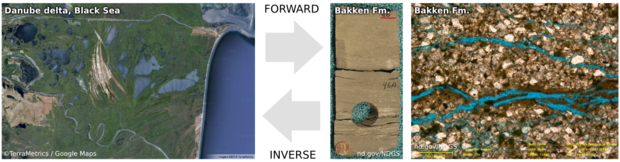

On the plane back from Calgary, I got an itch to do some image processing on some photographs I took of the wonderful rocks on display at the core convention. Almost inadvertently, I composed a sequence of image filters that imitates a seismic response. And I came to these questions:

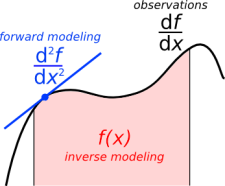

- Is this a viable way to do forward modeling?

- Can we exploit scale invariance to make more accurate forward models?

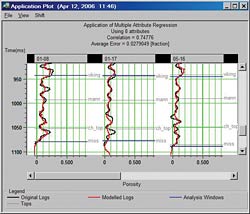

- Can we take the fabric from core and put it in a reservoir model?

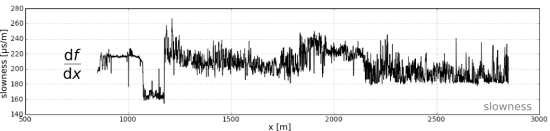

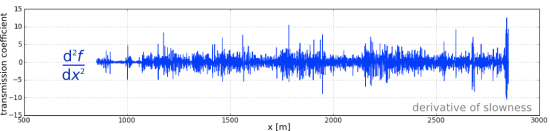

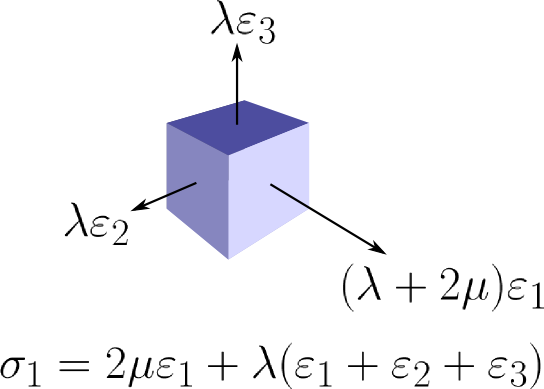

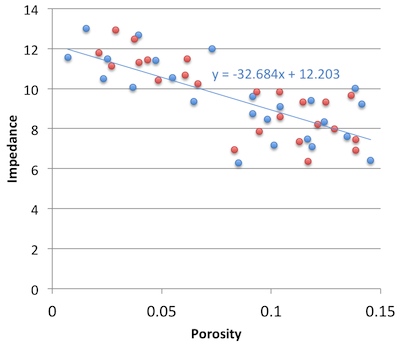

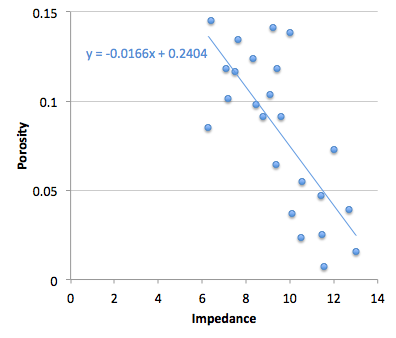

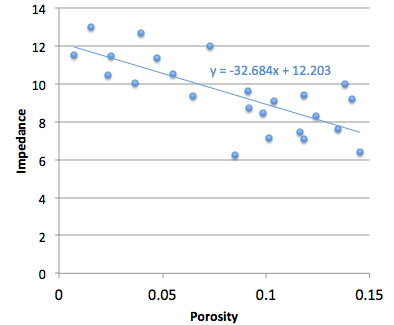

- What is the goodness of fit between colour and impedance?

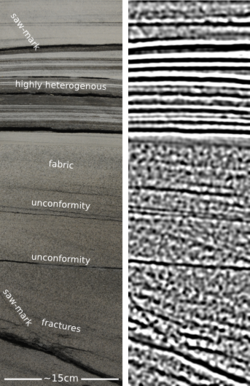

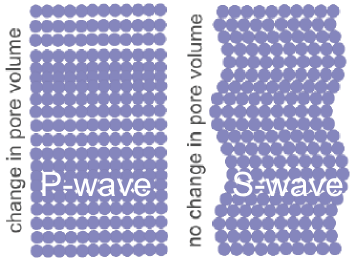

Above all, this image processing exercise is an unambiguous demonstration of the effects of bandwidth. The most important point is that no noise has been added. The sequence has three steps: convert to grayscale, compute the Laplacian, and apply a bandpass filter. This is analogous to the convolution of a seismic wavelet with the earth's reflectivity. Strictly speaking, it would be more physically sound to make a forward model using wavelet convolution (simple) or finite-difference simulation (less simple), but that level of rigour was beyond the scope of my tinkering.
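For anyone who wants to try the three steps, here is a rough NumPy sketch. It is not the exact filter chain I used: the grayscale weights, the five-point Laplacian, the hard-edged FFT bandpass along the vertical axis, and the cutoff values are all assumptions, and the function names are made up for illustration. A synthetic layered image stands in for a core photograph.

```python
import numpy as np

def to_grayscale(rgb):
    """Luma-weighted grayscale of an (H, W, 3) array (BT.601 weights, an assumption)."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb[..., :3].astype(float) @ weights

def laplacian(img):
    """Five-point discrete Laplacian with edge-replicated borders."""
    p = np.pad(img, 1, mode="edge")
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
            - 4.0 * p[1:-1, 1:-1])

def bandpass_vertical(img, low, high):
    """Keep only vertical spatial frequencies in [low, high] cycles per pixel."""
    freqs = np.abs(np.fft.fftfreq(img.shape[0]))
    keep = (freqs >= low) & (freqs <= high)
    spectrum = np.fft.fft(img, axis=0)
    return np.fft.ifft(spectrum * keep[:, None], axis=0).real

def pseudo_seismic(rgb, low=0.02, high=0.10):
    """Grayscale -> Laplacian -> bandpass; the cutoffs are hypothetical defaults."""
    return bandpass_vertical(laplacian(to_grayscale(rgb)), low, high)

# Synthetic stand-in for a core photo: 64 random flat layers, 4 pixels thick.
rng = np.random.default_rng(0)
layers = rng.integers(0, 256, size=64).repeat(4)
core = np.repeat(layers[:, None], 128, axis=1)
core_rgb = np.stack([core] * 3, axis=-1).astype(np.uint8)

section = pseudo_seismic(core_rgb)
```

To use a real photograph, load it into an `(H, W, 3)` array with whatever image library you prefer and pass it to `pseudo_seismic`. Narrowing the gap between `low` and `high` reduces the bandwidth, which is the effect the panels illustrate.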


The two panels help illustrate a few points. First, finely layered, heterogeneous stratal packages coalesce into crude seismic events. This is the effect of reducing bandwidth. Second, we can probably argue about what is 'signal' and what is 'noise'. However, there is no noise per se, just geology that looks noisy. What may be mistaken for noise might in fact be bona fide interfaces between materials with different properties.
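The coalescing effect is easy to reproduce in one dimension with the "simple" wavelet convolution approach. The sketch below is only an illustration under assumed numbers: the impedance values, the 10 ms layer, the 1 ms sample interval, and the 100 Hz and 25 Hz Ricker wavelets are all hypothetical. Two equal reflectors sit 10 ms apart; the broadband trace shows them as separate events, while the narrowband trace merges them into one.

```python
import numpy as np

def ricker(freq_hz, dt=0.001, half_length=0.1):
    """Zero-phase Ricker wavelet with an odd number of samples, centred on t = 0."""
    n = int(round(half_length / dt))
    t = np.arange(-n, n + 1) * dt
    a = (np.pi * freq_hz * t) ** 2
    return (1.0 - 2.0 * a) * np.exp(-a)

def reflectivity(impedance):
    """Normal-incidence reflection coefficients between adjacent samples."""
    z = np.asarray(impedance, dtype=float)
    return (z[1:] - z[:-1]) / (z[1:] + z[:-1])

# Hypothetical impedance log sampled at 1 ms: a 10 ms layer between two half-spaces.
z = np.full(500, 5000.0)
z[245:255] = 6000.0
z[255:] = 7200.0

r = reflectivity(z)  # two equal positive spikes, at samples 244 and 254

# mode="same" keeps each trace aligned with the reflectivity series.
broadband = np.convolve(r, ricker(100.0), mode="same")
narrowband = np.convolve(r, ricker(25.0), mode="same")

top, middle = 244, 249
print("100 Hz: top of layer", broadband[top], "mid-layer", broadband[middle])
print(" 25 Hz: top of layer", narrowband[top], "mid-layer", narrowband[middle])
```

At 100 Hz the amplitude peaks on each interface and dips between them. At 25 Hz the largest amplitude sits in the middle of the layer, so the two interfaces read as a single event.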

If the geometry of geology is largely scale invariant, perhaps, just perhaps, images like these can be used at the basin and reservoir scale. I really like the look of the crumbly fractures near the bottom of the image. This type of feature could drive the placement of a borehole and the production from a well. The patches, speckles, and bands in the image are genuine features of the geology, not issues of quality or noise.

Do you think there is a role for transforming photographs of rocks into seismic objects?

Except where noted, this content is licensed
