A new blog, and a new course

/There's a great new geoscience blog on the Internet — I urge you to add it to your blog-reading app or news reader or list of links or whatever it is you use to keep track of these things. It's called Geology and Python, and it contains exactly what you'd expect it to contain!

The author, Bruno Ruas de Pinho, has nine posts up so far, all excellent. The range of topics is quite broad:

- Calculating the Hoek–Brown failure criterion

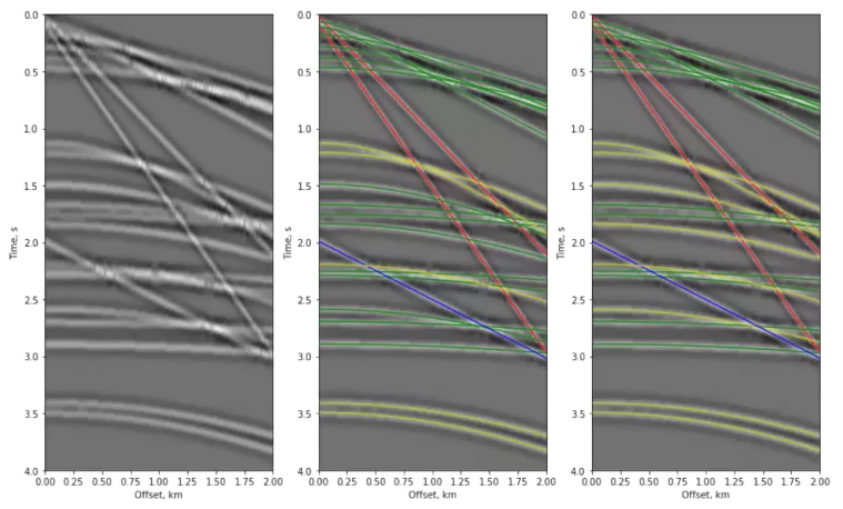

- Interpolation with machine learning (right)

- Stereonets and rose diagrams

In each post, Bruno takes some geoscience challenge — nothing too huge, but the problems aren't trivial either — and then methodically steps through solving the problem in Python. He's clearly got a good quantitative brain, having recently graduated in geological engineering from the Federal University of Pelotas, aka UFPel, Brazil, and he is now available for hire. (He seems to be pretty sharp, so if you're doing anything with computers and geoscience, you should snag him.)

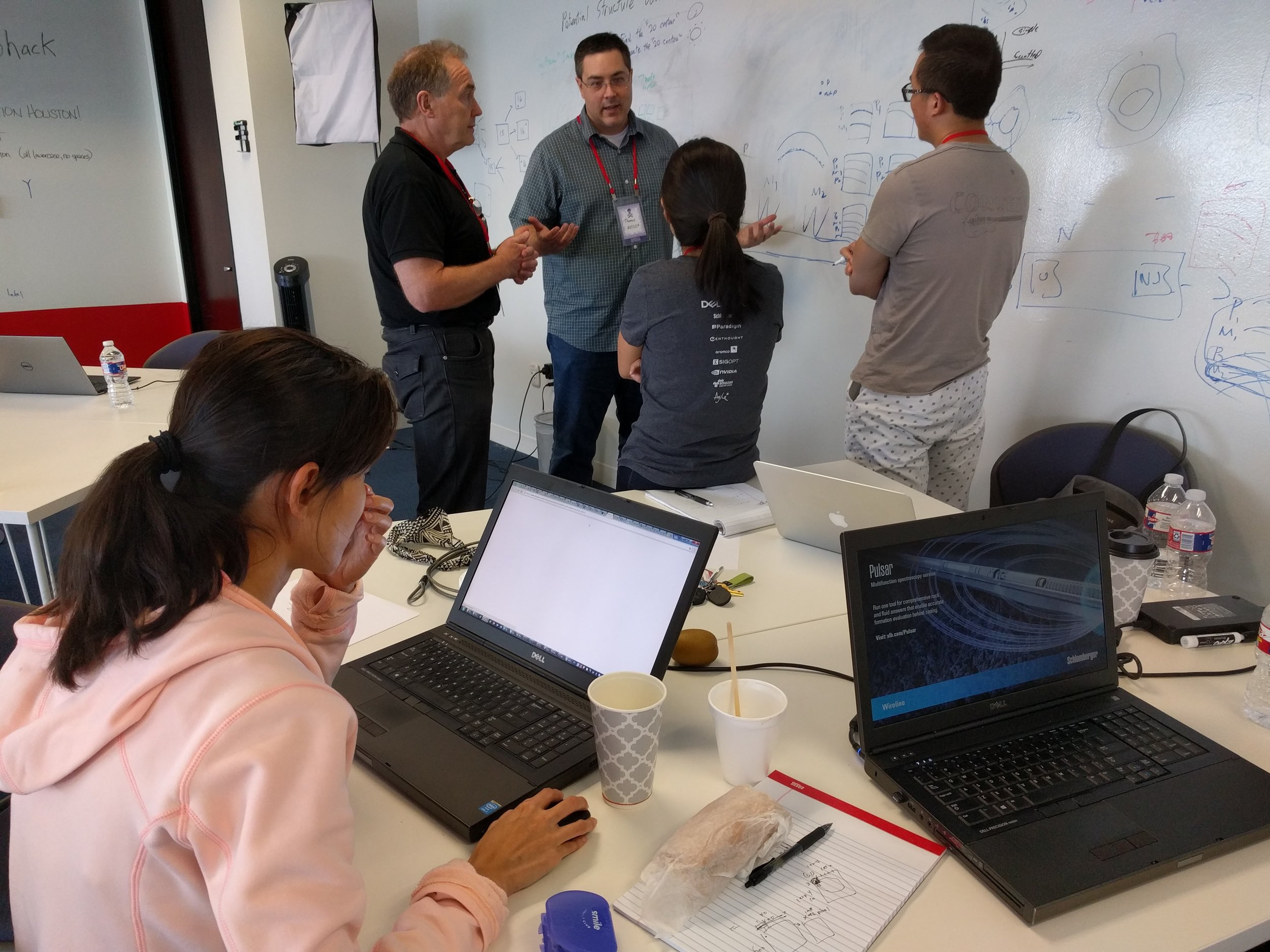

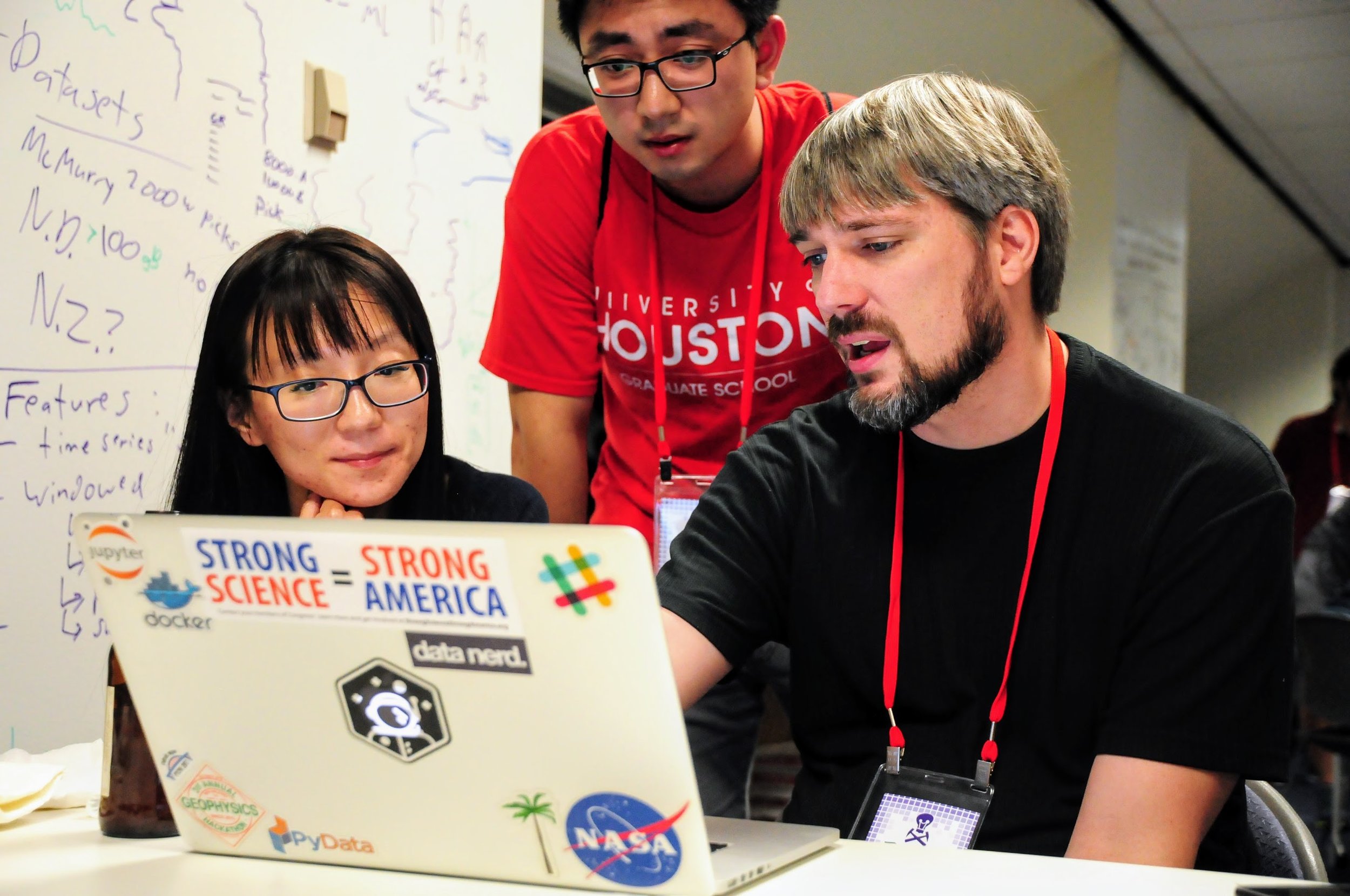

A new course for Calgary

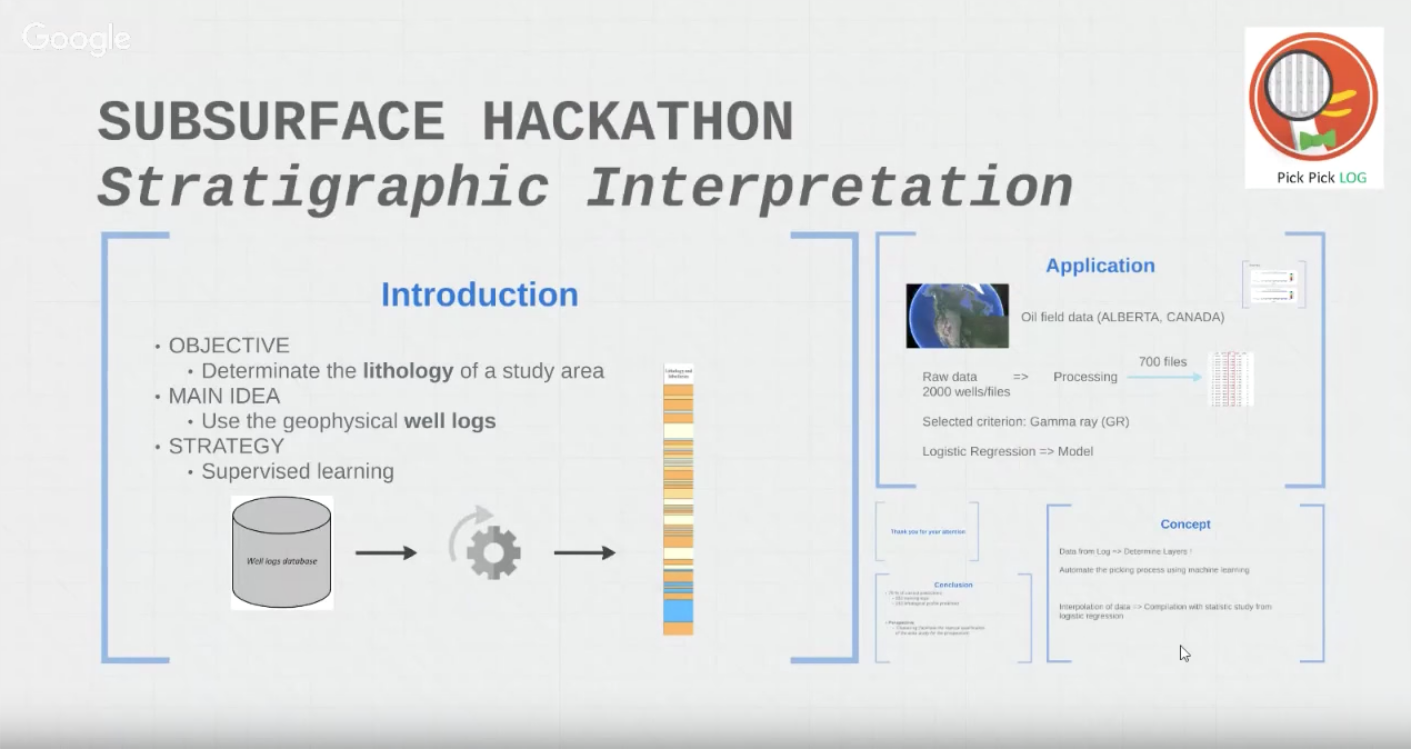

We've run lots of Introduction to Python courses before, usually with the name Creative Geocomputing. Now we're adding a new dimension, combining a crash introduction to Python with a crash introduction to machine learning. It's ambitious, for sure, but the idea is not to turn you into a programmer. We aim to:

- Help you set up your computer to run Python, virtual environments, and Jupyter Notebooks.

- Get you started with downloading and running other people's packages and notebooks.

- Verse you in the basics of Python and machine learning so you can start to explore.

- Set you off with ideas and things to figure out for that pet project you've always wanted to code up.

- Introduce you to other Calgarians who love playing with code and rocks.

We do all this wielding geoscientific data — it's all well logs and maps and seismic data. There are no silly examples, and we don't shy away from so-called advanced things — what's the point in computers if you can't do some things that are really, really hard to do in your head?

Tickets are on sale now at Eventbrite, it's $750 for 2 days — including all the lunch and code you can eat.

Except where noted, this content is licensed

Except where noted, this content is licensed