What is scientific computing?

I started my career in sequence stratigraphy, so I know a futile discussion about semantics when I see one. But humour me for a second.

As you may know, we offer a multi-day course on 'geocomputing'. Somebody just asked me: what is this mysterious, made-up-sounding discipline? Swiftly followed by: can you really teach people how to do computational geoscience in a few days? And then: can YOU really teach people anything??

Good questions

You can come at the same kind of question from different angles. For example, sometimes professional programmers get jumpy about programming courses and the whole "learn to code" movement. I think the objection is that programming is a profession, like other kinds of engineering, and no-one would dream of offering a 3-day course on, say, dentistry for beginners.

These concerns are valid, sort of.

- No, you can't learn to be a computational scientist in 3 days. But you can make a start. A really good one at that.

- And no, we're not programmers. But we're scientists who get things done with code. And we're here to help.

- And definitely no, we're not trying to teach people to be software engineers. We want to see more computational geoscientists, which is a different thing entirely.

So what's geocomputing then?

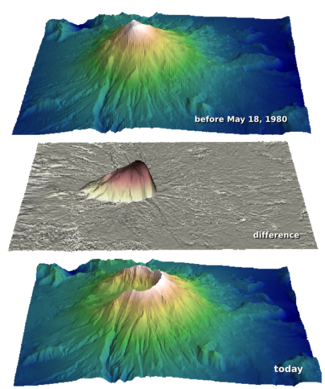

Words seem inadequate for nuanced discussion. Let's instead use the language of ternary diagrams. Here's how I think 'scientific computing' stacks up against 'computer science' and 'software engineering'...

If you think these are confusing, just be glad I didn't go for tetrahedrons.

These are silly, of course. We could argue about them for hours, I'm sure. Where would IT fit? ("It's all about the business" or something like that.) Where does Agile fit? (I've caricatured our journey, or tried to.) Where do you fit?

Except where noted, this content is licensed
