What is AVO?

I used to be a geologist (but I'm OK now). When I first met seismic data, I took the reflections and geometries quite literally. The reflections come from geology, so it seems reasonable to interpret them as geology. But the reflections are waves, and waves are slippery things: they have to travel through kilometres of imperfectly known geology; they can interfere and diffract; they emanate spherically from the source and get much weaker quickly. This section from the Rockall Basin in the east Atlantic shows this attenuation nicely, as well as spectacular echo reflections from the ocean floor called multiples:

Data from the Virtual Seismic Atlas, contributed by the British Geological Survey.

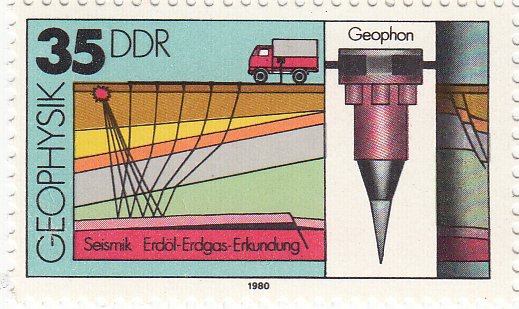

Impedance is the product of density and velocity.

Despite the complexity of seismic reflections, all is not lost. Even geologists interpreting seismic know that the strength of seismic reflections can have real, quantitative, geological meaning. For example, amplitude is related to changes in acoustic impedance Z, which is equal to the product of bulk density ρ and P-wave velocity V, itself related to lithology, fluid, and porosity.
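
To put numbers on that, here is a minimal sketch of impedance and the reflection strength it implies for a ray travelling straight down. The reflectivity formula, R = (Z2 - Z1) / (Z2 + Z1), is the standard textbook one and is not written out in this post; the function names and the rock properties are made up for illustration.

```python
def acoustic_impedance(density, velocity):
    """Acoustic impedance Z = rho * V (density in kg/m^3, velocity in m/s)."""
    return density * velocity


def normal_incidence_reflectivity(z_upper, z_lower):
    """Reflection coefficient for a vertical ray going from the upper layer into the lower one."""
    return (z_lower - z_upper) / (z_lower + z_upper)


# Hypothetical rock properties, chosen only for illustration.
shale = acoustic_impedance(density=2400.0, velocity=2800.0)      # overlying layer
sandstone = acoustic_impedance(density=2200.0, velocity=3200.0)  # underlying layer

r0 = normal_incidence_reflectivity(shale, sandstone)
print(f"Z shale = {shale:.3g}, Z sandstone = {sandstone:.3g}, R0 = {r0:.3f}")
```

Only a few percent of the amplitude comes back from a contrast like this one, which is part of why reflections fade so quickly with depth.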

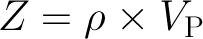

Flawed cartoon of a marine seismic survey. OU, CC-BY-SA-NC.

But when the amplitude versus offset (AVO) behaviour of seismic reflections gets mentioned, most non-geophysicists switch off. If that's your reaction too, don't be put off by the jargon: it's really not that complicated.

The idea that we collect data from different angles is not complicated or scary. Remember the classic cartoon of a seismic survey (right). It's clear that some of the ray paths bounce off the geological strata at relatively small incidence angles, closer to straight down-and-up. Others, arriving at receivers further away from the source, have greater angles of incidence. The distance between the source and an individual receiver is called offset, and it can be worked out from the seismic field data because the exact locations of the source and receivers are always known.
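
To make the link between offset and angle concrete, here is a minimal sketch. It assumes straight rays in a constant-velocity earth and a flat, horizontal reflector, which real surveys only approximate; the function name and the 2000 m depth are hypothetical.

```python
import math


def incidence_angle_deg(offset, depth):
    """Angle of incidence at a flat reflector, assuming straight rays (constant velocity)."""
    # The reflection point sits midway between source and receiver,
    # so the ray covers half the offset horizontally on its way down.
    return math.degrees(math.atan2(offset / 2.0, depth))


depth = 2000.0  # hypothetical reflector depth in metres
for offset in (0.0, 1000.0, 2000.0, 4000.0):
    angle = incidence_angle_deg(offset, depth)
    print(f"offset {offset:6.0f} m -> {angle:5.1f} degrees")
```

With these assumptions, an offset of twice the reflector depth corresponds to an incidence angle of 45 degrees, and zero offset to a vertical ray.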

The basic physics behind AVO analysis is that the strength of a reflection depends not only on the contrast in acoustic impedance but also on the angle of incidence. Only when this angle is 0 (a vertical, or zero-offset, ray) does the simple relationship above hold.

Total internal reflection underwater. Source: Mbz1 via Wikimedia Commons.

Though it may be unintuitive at first, angle-dependent reflectivity is an idea we all know well. Imagine an ordinary glass window: you can see through it perfectly well when you look straight through it, but when you move to a wide angle it becomes very reflective. The interface between water and air is similarly reflective at wide angles; seen from below, as in this underwater view, it turns into a perfect mirror beyond the so-called critical angle.
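
The critical angle for the underwater view follows from Snell's law, and a rough sketch is easy to write down. The refractive indices below are approximate textbook values (about 1.33 for water, 1.00 for air), and the function name is hypothetical.

```python
import math


def critical_angle_deg(n_incident, n_transmitted):
    """Critical angle from Snell's law, or None if total reflection cannot occur.

    Total internal reflection only happens when the wave starts in the medium
    with the higher refractive index (the slower medium).
    """
    if n_transmitted >= n_incident:
        return None
    return math.degrees(math.asin(n_transmitted / n_incident))


# Approximate refractive indices, for illustration only.
N_WATER, N_AIR = 1.33, 1.00

print(critical_angle_deg(N_WATER, N_AIR))  # looking up from under water
print(critical_angle_deg(N_AIR, N_WATER))  # looking down from the air: None
```

With these values the critical angle comes out a little under 49 degrees. The seismic analogue swaps refractive index for velocity: a critical angle exists only where the wave passes from a slower layer into a faster one.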

Karl Bernhard Zoeppritz (German, 1881–1908) was the first seismologist to describe the relationship between reflectivity and angle of incidence. In this context, describe means write down the equations for. Not two or three equations, lots of equations.

The Zoeppritz equations are a very good model for how seismic waves propagate in the earth. There are some unnatural assumptions about isotropy, total isolation of the interface, and other things, but they work well in many real situations. The problem is that the equations are unwieldy, especially if you are starting from seismic data and trying to extract rock properties, that is, trying to solve the so-called inverse problem. Since we want to be able to do useful things quickly, and since seismic data are inherently approximate anyway, several geophysicists have devised much friendlier models of reflectivity with offset.
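
As a taste of how friendly such a model can be, here is a sketch of one widely used example, the two-term Shuey approximation, R(θ) ≈ R0 + G sin²θ. This post does not say which approximations it has in mind, so the choice of Shuey is an assumption, and the function name and rock properties are hypothetical. The approximation is meant for modest angles and small contrasts across the interface.

```python
import math


def shuey_two_term(vp1, vs1, rho1, vp2, vs2, rho2, theta_deg):
    """Two-term Shuey approximation: R(theta) ~ R0 + G * sin^2(theta).

    Layer 1 is above the interface, layer 2 below. vp and vs are P- and
    S-wave velocities, rho is bulk density, theta is the angle of incidence.
    """
    # Averages and differences across the interface.
    vp, vs, rho = (vp1 + vp2) / 2, (vs1 + vs2) / 2, (rho1 + rho2) / 2
    dvp, dvs, drho = vp2 - vp1, vs2 - vs1, rho2 - rho1

    r0 = 0.5 * (dvp / vp + drho / rho)  # intercept: zero-offset reflectivity
    g = 0.5 * dvp / vp - 2 * (vs / vp) ** 2 * (drho / rho + 2 * dvs / vs)  # gradient
    return r0 + g * math.sin(math.radians(theta_deg)) ** 2


# Hypothetical shale over a gas sand, for illustration only.
upper = dict(vp1=2900.0, vs1=1400.0, rho1=2400.0)
lower = dict(vp2=2600.0, vs2=1600.0, rho2=2100.0)

for theta in (0, 10, 20, 30):
    r = shuey_two_term(**upper, **lower, theta_deg=theta)
    print(f"{theta:2d} degrees: R = {r:+.3f}")
```

For this made-up interface the reflection is negative at zero offset and grows stronger with angle, the kind of behaviour AVO analysis looks for.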

I'll take a look at these friendlier models next time, because I want to tell you a bit about how we've implemented them in our soon-to-be-released mobile app, AVO*. No equations, I promise! Well, one or two...

Except where noted, this content is licensed
