Great geophysicists #6: Robert Hooke

Hooke's famous law tells us how things deform, so, along with Newton, Hooke is a parent of the wave equation. The derivation starts by equating the force due to acceleration (of a vibrating particle, say) and the force due to elastic deformation:

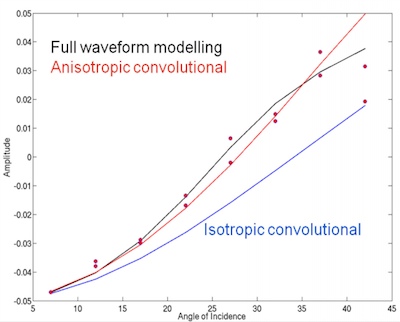

where m is mass, x is displacement, the two dots denote the second derivative with respect to time (a.k.a. acceleration), and k is the spring constant. This powerful insight, which allows us to compute a particle's motion at a given time, was first made by d'Alembert in about 1742. It is the founding principle of seismic rock physics.
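To make the equation concrete, here is a minimal sketch in Python (our choice; the post itself has no code). It integrates m ẍ = -kx numerically and compares the result with the textbook solution for a particle released from rest, x(t) = x0 cos(ωt) with ω = √(k/m). The mass, spring constant, starting displacement, and time step are arbitrary illustrative values, and the function names are hypothetical. The integrator is velocity Verlet, a standard scheme for this kind of oscillator.

```python
import math

def simulate_spring(m, k, x0, v0, dt, n_steps):
    """Integrate m * x'' = -k * x with velocity Verlet; return (t, x) samples."""
    x, v = x0, v0
    a = -k * x / m  # acceleration from Hooke's law: F = -k x, so a = F / m
    samples = [(0.0, x)]
    for i in range(1, n_steps + 1):
        x = x + v * dt + 0.5 * a * dt * dt   # advance position
        a_new = -k * x / m                   # restoring force at the new position
        v = v + 0.5 * (a + a_new) * dt       # advance velocity with averaged acceleration
        a = a_new
        samples.append((i * dt, x))
    return samples

def analytic(m, k, x0, t):
    """Exact displacement for a particle released from rest at x0."""
    omega = math.sqrt(k / m)  # angular frequency set by stiffness and mass
    return x0 * math.cos(omega * t)

# Illustrative values only: mass in kg, spring constant in N/m, displacement in m.
m, k, x0 = 2.0, 50.0, 0.01
samples = simulate_spring(m, k, x0, v0=0.0, dt=1e-3, n_steps=2000)
max_err = max(abs(x - analytic(m, k, x0, t)) for t, x in samples)
print(f"angular frequency = {math.sqrt(k / m):.1f} rad/s")
print(f"max error vs analytic = {max_err:.2e} m")
```

A stiffer spring (larger k) or a lighter particle (smaller m) raises ω, so the particle vibrates faster. The same trade-off between stiffness and inertia is what sets seismic wave speeds in rocks.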

Hooke the geologist

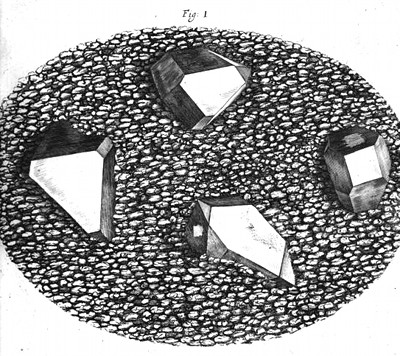

Like most scientists of the 17th century, Hooke was no specialist. One of his best-known works was Micrographia, first published in 1665. The microscope was invented in the late 1500s, but Hooke was one of the first people to meticulously document and beautifully draw his observations. His book was a smash hit by all accounts, inspiring wonder in everyone who read it (Samuel Pepys, for example). Among other things, Hooke described samples of petrified wood, forams, ammonites, and crystals of quartz in a flint nodule (left). Hooke also wrote about the chalk formations in the cliffs near his home town.

Hooke went on to help Wren rebuild London after the Great Fire of 1666, and earned great respect for this work too. So esteemed was he that Newton was apparently rather jealous of him, and one historian has called him 'England's Leonardo'. He never married, lived in his rooms at Gresham College in London for most of his adult life, and was buried in Bishopsgate, London. We salute him as one of the fathers of geophysics.

The painting of Hooke, by Rita Greer, is licensed under a Free Art License. It's an interpretation based on descriptions of him ("his chin sharp, and forehead large"); amazingly, there are no known contemporary images of him. Hear more about this.

You can read more about the relationship between Hooke's law and seismic waves in Bill Goodway's and Evan's chapters in 52 Things You Should Know About Geophysics. Download their chapters for free!

Except where noted, this content is licensed