A focus on building

We've got some big plans for modelr.io, our online forward modeling tool. They're so big, we're hiring! An exhilarating step for a small company. If you are handy with the JavaScript, or know someone who is, scroll down to read all about it!

Here are some of the cool things in Modelr's roadmap:

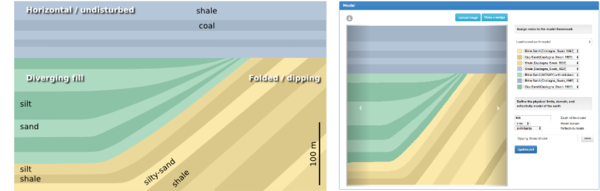

Interactive 1D models – to support fluid substitution, we need to handle physical properties of pore fluids as well as rocks. Our prototype (right) supports arbitrary layers, but eventually we'd like to allow uploading well logs too.
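To show why the fluid properties matter, here is a minimal Python sketch of fluid substitution using Gassmann's equation. It is not Modelr's code: the function names and every number in it (mineral, dry-rock and fluid moduli, densities, porosity) are illustrative assumptions, with moduli in GPa and densities in g/cm³ so that velocity comes out in km/s.

```python
import math

def gassmann_ksat(k_dry, k_min, k_fl, phi):
    """Saturated bulk modulus from Gassmann's equation (all moduli in GPa)."""
    numerator = (1.0 - k_dry / k_min) ** 2
    denominator = phi / k_fl + (1.0 - phi) / k_min - k_dry / k_min ** 2
    return k_dry + numerator / denominator

def saturated_vp(k_dry, mu, k_min, rho_min, k_fl, rho_fl, phi):
    """P-wave velocity (km/s) of the rock saturated with the given fluid."""
    k_sat = gassmann_ksat(k_dry, k_min, k_fl, phi)
    rho = rho_min * (1.0 - phi) + rho_fl * phi   # bulk density, g/cm3
    # Shear modulus mu is unchanged by the pore fluid in Gassmann's theory.
    return math.sqrt((k_sat + 4.0 * mu / 3.0) / rho)

# Hypothetical sandstone: quartz-like mineral, 25% porosity (assumed values).
rock = dict(k_dry=12.0, mu=10.0, k_min=37.0, rho_min=2.65, phi=0.25)

vp_brine = saturated_vp(k_fl=2.8, rho_fl=1.05, **rock)   # brine-filled
vp_gas = saturated_vp(k_fl=0.04, rho_fl=0.10, **rock)    # gas-filled

print(f"Vp brine: {vp_brine:.2f} km/s, Vp gas: {vp_gas:.2f} km/s")
```

Swapping the fluid changes both the bulk modulus and the density of the layer, which is why a 1D model needs pore-fluid properties alongside the rock frame.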

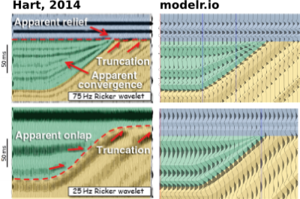

Exporting models – imagine creating an earth model of your would-be prospect, and sending it around to your asset team to strengthen its prognosis. Modelr solves the forward problem, PickThis solves the inverse. We need to link them up. We also need SEG-Y export, so you can see your model next to your real data.

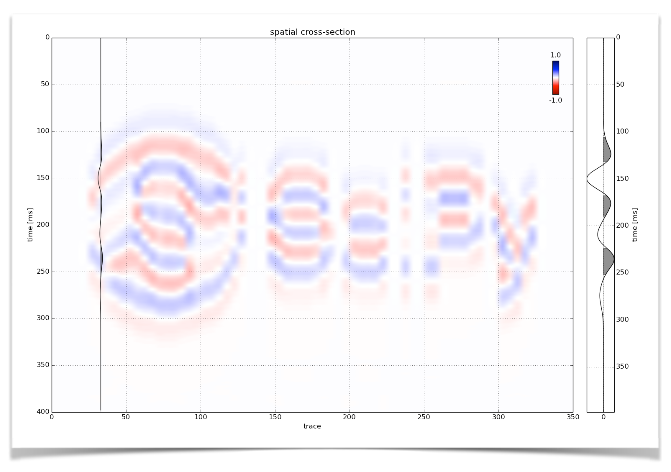

Models from sketches – Want to do a quick sketch of a geologic setting, and see what it would look like under the lens of seismic? At the hackathon last month, Matteo Niccoli and friends showed a path to this dream: sketch a picture, take a photo, and upload it to the app with your phone (right).

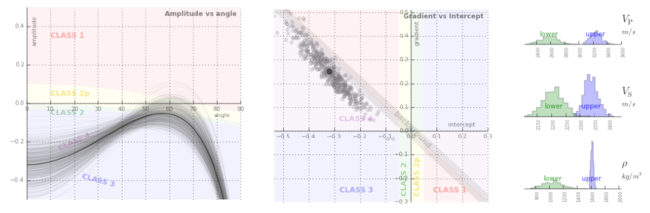

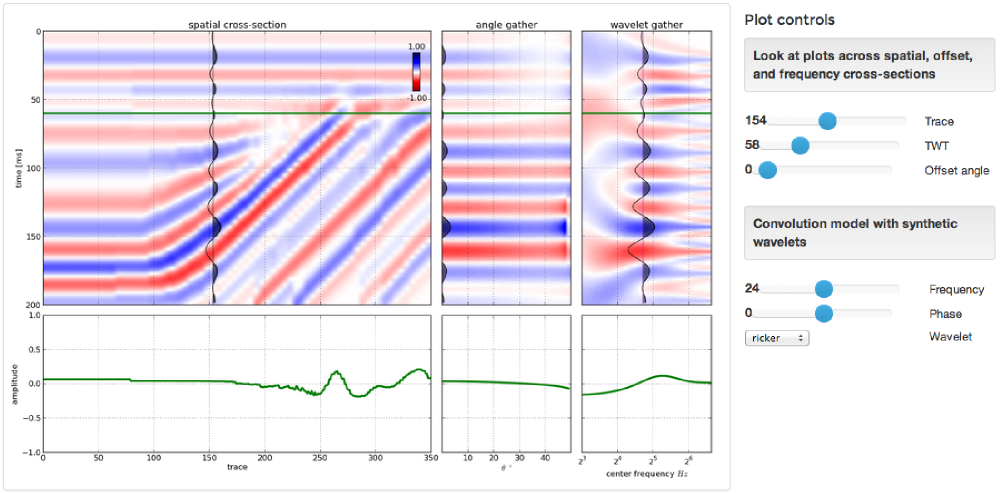

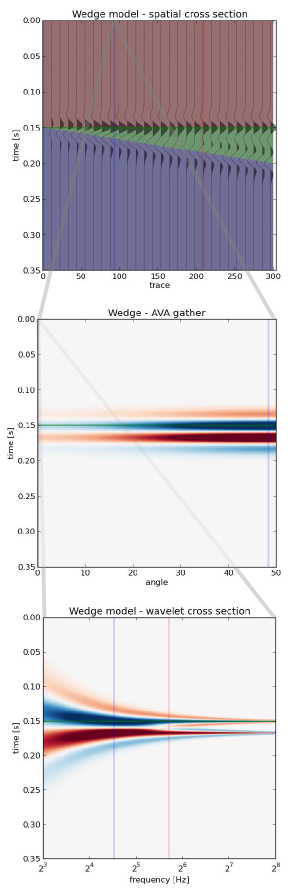

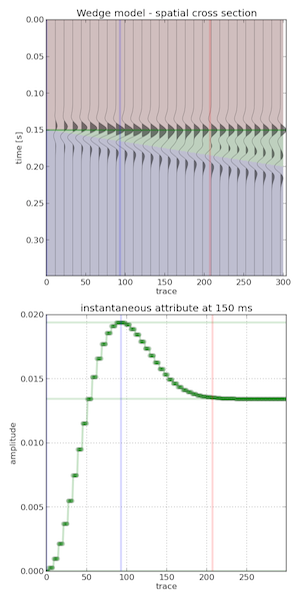

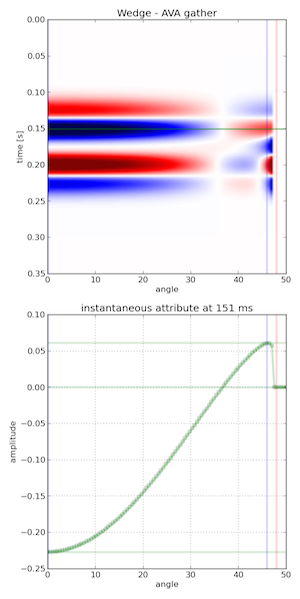

3D models – Want to visualize how seismic amplitudes vary according to bed thickness? Build a 2D wedge model and you can analyze a tuning curve. Now, want to explore the same wedge spanning a range of physical properties? That's a job for a 3D wedge model.
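For a feel of what that involves, here is a rough, self-contained Python sketch, not Modelr's implementation. It assumes a wedge bounded by two reflectors of equal and opposite reflection coefficient, a 25 Hz Ricker wavelet and 1 ms sampling; all names and values are hypothetical. The tuning curve is the peak amplitude at each wedge thickness, and repeating it over a range of reflection coefficients adds the third dimension.

```python
import math

def ricker(f, dt, length=0.128):
    """Ricker wavelet with peak frequency f (Hz), sampled every dt seconds."""
    n = int(round(length / dt / 2))
    return [(1 - 2 * (math.pi * f * k * dt) ** 2)
            * math.exp(-(math.pi * f * k * dt) ** 2)
            for k in range(-n, n + 1)]

def convolve(signal, wavelet):
    """Full discrete convolution of two sequences."""
    out = [0.0] * (len(signal) + len(wavelet) - 1)
    for i, s in enumerate(signal):
        if s == 0.0:
            continue  # skip empty samples; only the two reflectors contribute
        for j, w in enumerate(wavelet):
            out[i + j] += s * w
    return out

def tuning_curve(rc_top, rc_base, thicknesses_ms, f=25.0, dt=0.001):
    """Peak absolute amplitude of the wedge response for each time thickness."""
    wavelet = ricker(f, dt)
    curve = []
    for t_ms in thicknesses_ms:
        n = int(round(t_ms / 1000 / dt))   # thickness in samples
        spikes = [0.0] * (n + 1)
        spikes[0] += rc_top                # top of wedge
        spikes[n] += rc_base               # base of wedge
        trace = convolve(spikes, wavelet)
        curve.append(max(abs(a) for a in trace))
    return curve

thicknesses = list(range(0, 61))           # two-way time thickness, ms
curve = tuning_curve(-0.1, 0.1, thicknesses)
tuning_thickness = thicknesses[curve.index(max(curve))]
print("Tuning thickness (ms):", tuning_thickness)

# The "3D" idea: one tuning curve per value of a physical property,
# here stood in for by the magnitude of the reflection coefficient.
cube = {rc: tuning_curve(-rc, rc, thicknesses) for rc in (0.05, 0.10, 0.15)}
```

For a Ricker wavelet the amplitude should peak where the wedge thickness matches the wavelet's peak-to-trough time, about √6/(2πf), or roughly 16 ms at 25 Hz.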

Seismic attributes – Seismic discontinuity attributes, like continuity or curvature, can be ineffective when viewed in cross-section; they're really meant to be shown in time-slices. There is a vast library of attributes and co-rendering technologies we want to provide.

If you get excited about building simple tools on the web for difficult tasks under the ground, we'd love to talk to you. We have an open position for a full-time web developer to help us carry this project forward. Check out the job posting.

Except where noted, this content is licensed
