Machine learning meets seismic interpretation

/Agile has been reverberating inside the machine learning echo chamber this past week at EAGE. The hackathon's theme was machine learning, Monday's workshop was all about machine learning. And Matt was also supposed to be co-chairing the session on Applications of machine learning for seismic interpretation with Victor Aare of Schlumberger, but thanks to a power-cut and subsequent rescheduling, he found himself double-booked so, lucky me, he invited me to sit in his stead. Here are my highlights, from the best seat in the house.

Before I begin, I must mention the ambivalence I feel towards the fact that 5 of the 7 talks featured the open-access F3 dataset. A round of applause is certainly due to dGB Earth Sciences for their long time stewardship of open data. On the other hand, in the sardonic words of my co-chair Victor Aarre, it would have been quite valid if the session was renamed The F3 machine learning session. Is it really the only quality attribute research dataset our industry can muster? Let's do better.

Using seismic texture attributes for salt classification

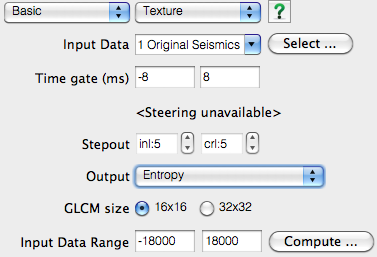

Ghassan AlRegib ruled the stage throughout the session with not one, not two, but three great talks on behalf of himself and his grad students at Georgia Institute of Technology (rather than being a show of bravado, this was a result of problems with visas). He showed some exciting developments in shallow learning methods for predicting facies in seismic data. In addition to GLCM attributes, he also introduced a couple of new (to me anyway) attributes for salt classification. Namely, textural gradient and a thing he called seismic saliency, a metric modeled after the human visual system describing the 'reaction' between relative objects in a 3D scene.

Twelve Seismic attributes used for multi-attribute salt-boundary classification. (a) is RMS Amplitude, (B) to (M) are TEXTURAL attributes. See abstract for details. This figure is copyright of Ghassan AlRegib and licensed CC-BY-SA by virtue of being generated from the F3 dataset of dGB and TNO.

Ghassan also won the speakers' lottery, in a way. Due to the previous day's power outage and subsequent reshuffle, the next speaker in the schedule was a no-show. As a result, Ghassan had an extra 20 minutes to answer questions. Now for most speakers that would be a public-speaking nightmare, but Ghassan hosted the onslaught of inquiring minds beautifully. If we hadn't had to move on to the next next talk, I'm sure he could have entertained questions all afternoon. I find it fascinating how unpredictable events like power outages can actually create the conditions for really effective engagement.

Salt classification without using attributes (using deep learning)

Matt reported on Anders Waldeland's work a year ago, and it was interesting to see how his research has progressed, as he nears the completion of his thesis.

Anders successfully demonstrated how convolutional neural networks (CNNs) can classify salt bodies in seismic datasets. So, is this a big deal? I think it is. Indeed, Anders's work seems like a breakthough in seismic interpretation, at least of salt bodies. To be clear, I don't think this means that it is time for seismic interpreters to pack up and go home. But maybe we can start looking forward to spending our time doing less tedious things than picking complex salt bodies.

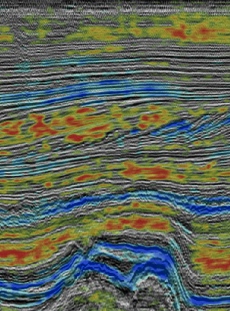

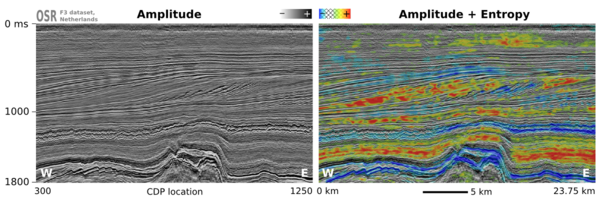

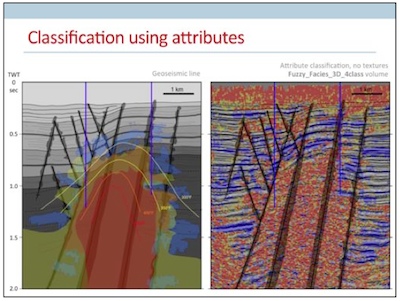

He trained a CNN on one manually labeled slice of a 3D cube and used the network to automatically classify the full 3D salt body (on the right in the figure). Conventional algorithms for salt picking, such as that used by AlRegib (see above), typically rely on seismic attributes to define a feature space. This requires professional insight and judgment, and is prone to error and bias. Nicolas Audebert mentioned the same shortcoming in his talk in the workshop Matt wrote about last week. In contrast, the CNN algorithm works directly on the seismic data, learning the most discriminative filters on its own, no attributes needed.

Intuition training

Machine learning isn't just useful for computing in the inverse direction such as with inversion, seismic interpretation, and so on. Johannes Amtmann showed us how machine learning can be useful for ranking the performance of different clustering methods using forward models. It was exciting to see: we need to get back into the habit of forward modeling, each and every one of us. Interpreters build synthetics to hone their seismic intuition. It's time to get insanely good at building forward models for machines, to help them hone theirs.

There were so many fascinating problems being worked on in this session. It was one of the best half-day sessions of technical content I've ever witnessed at a subsurface conference. Thanks and well done to everyone who presented.

References

- H Di, M Shafiq and G AlRegib (2017). Multi-attribute K-means Cluster Analysis for Salt Boundary Detection.

- H Di and G AlRegib (2017). Seismic Multi-attribute Classification for Salt Boundary Detection - A Comparison.

- Y Alaudah and G AlRegib, (2017). A Weakly-Supervised Approach to Seismic Structure Labeling.

- A Waldeland and A Solberg (2017). Salt Classification Using Deep Learning.

- J Amtmann et al. (2017). Testing of Clustering Algorithms on Different 3D Seismic Models.

Except where noted, this content is licensed

Except where noted, this content is licensed