Openness is a two-way street

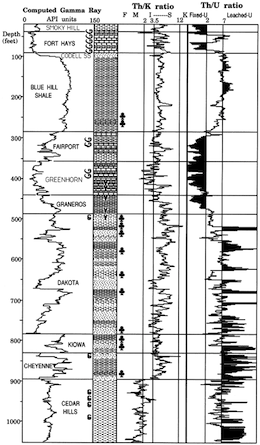

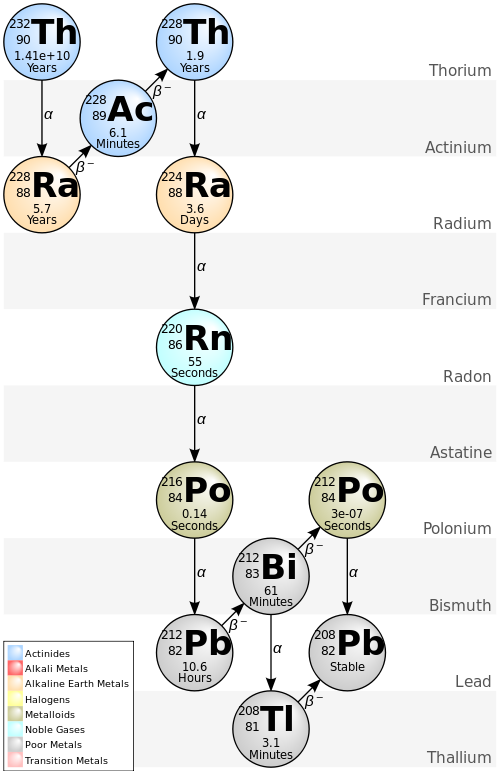

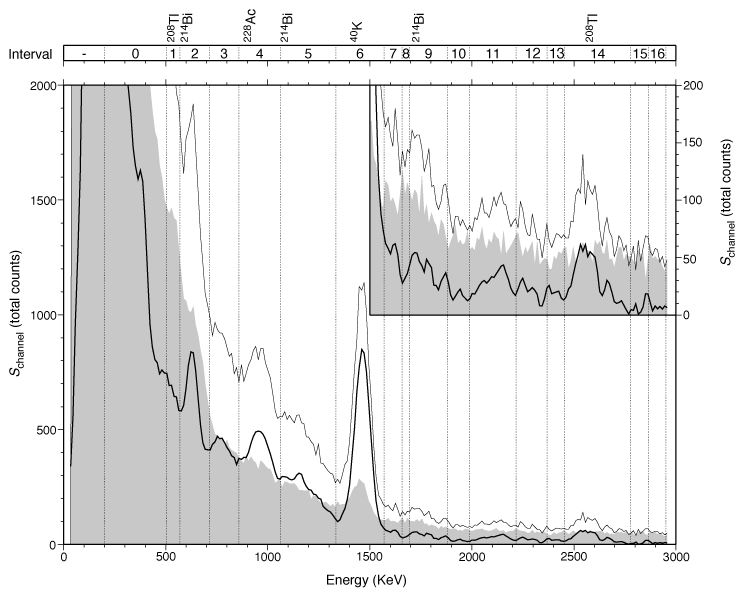

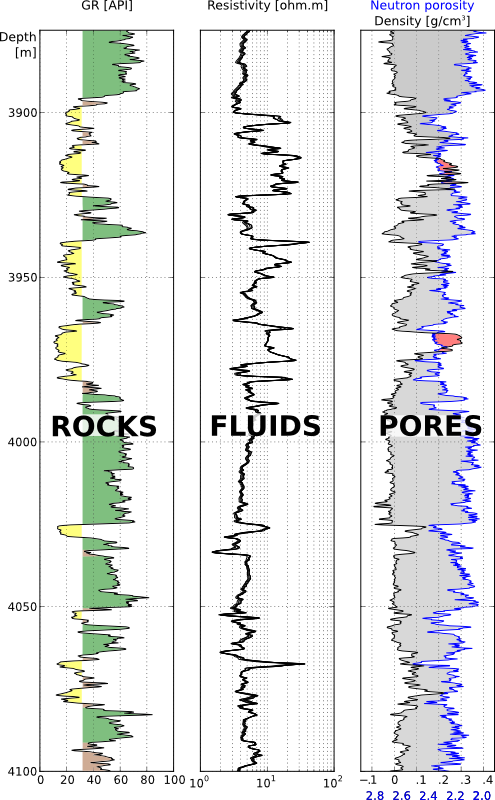

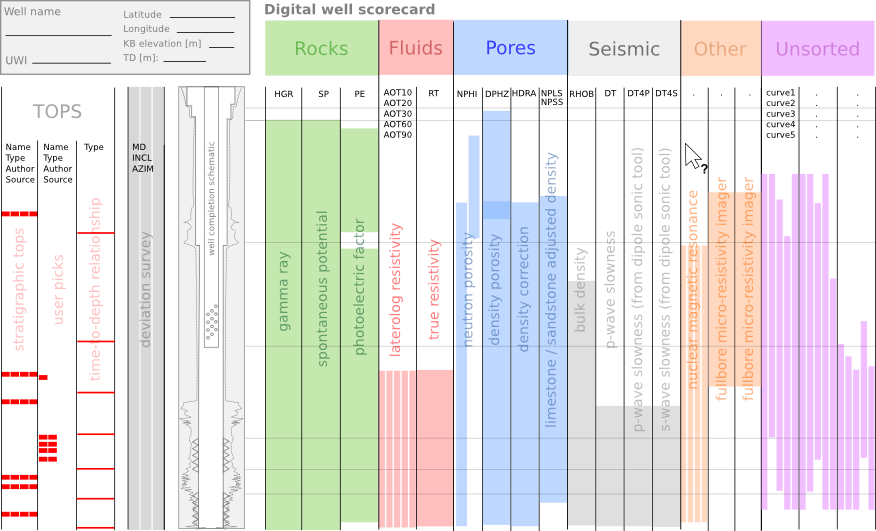

Last week the Data Analysis Study Group of the SPE Gulf Coast Section announced a new machine learning contest (I’m afraid registration is now closed, even though the contest has not started yet). The task is to predict shear-wave sonic from other logs, similar to the SPWLA PDDA contest last year. This is a valuable problem in the subsurface, because the shear sonic log is essential for computing the elastic properties of rocks, and therefore for predicting rock and fluid properties or processing seismic. Indeed, TGS have built a business on predicted logs with their ARLAS product. There’s money in log prediction!
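To make the "elastic properties" point concrete, here is a minimal sketch of what a shear sonic log lets you compute. It uses the standard isotropic relations (shear modulus μ = ρ·Vs², and Poisson's ratio from the Vp/Vs ratio). The function names and the example values are illustrative assumptions, not anything from the contest data.

```python
def slowness_to_velocity(dt_us_per_ft):
    """Convert sonic slowness in microseconds per foot to velocity in m/s."""
    return 0.3048e6 / dt_us_per_ft


def shear_modulus(rho, vs):
    """Shear modulus in Pa, from density (kg/m^3) and S-wave velocity (m/s)."""
    return rho * vs**2


def poissons_ratio(vp, vs):
    """Poisson's ratio from P- and S-wave velocities (same units)."""
    return (vp**2 - 2 * vs**2) / (2 * (vp**2 - vs**2))


# Hypothetical single-sample log values, for illustration only.
dtc = 80.0    # compressional slowness, us/ft
dts = 160.0   # shear slowness, us/ft (the log the contest asks you to predict)
rho = 2400.0  # bulk density, kg/m^3

vp = slowness_to_velocity(dtc)
vs = slowness_to_velocity(dts)
mu = shear_modulus(rho, vs)
nu = poissons_ratio(vp, vs)
```

Without a measured or predicted shear log there is no Vs, and neither quantity can be computed, which is why a good prediction is worth money.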

The task looks great, but there’s one big problem: the dataset is not open.

Why is this a problem?

Before answering that, let’s look at some context.

What’s a machine learning contest?

Good question. Typically, an organization releases a dataset (financial timeseries, Netflix viewer data, medical images, or whatever). They invite people to predict some valuable property (when to sell, which show to recommend, how to treat the illness, or whatever). And they pick the best, measured against known labels on a hidden dataset.
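As a rough sketch of that last step, here is how an organizer might score submissions against a hidden set of labels. The metric (root-mean-square error), the team names, and the numbers are all assumptions for illustration; nothing here says which metric the SPE contest will use.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error between two equal-length, non-empty sequences."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def rank_submissions(hidden_labels, submissions):
    """Return (team, score) pairs sorted best first (lowest RMSE)."""
    scores = {team: rmse(hidden_labels, preds) for team, preds in submissions.items()}
    return sorted(scores.items(), key=lambda item: item[1])


# Hypothetical hidden labels, known only to the organizer.
hidden_labels = [120.0, 135.0, 150.0]

# Hypothetical predictions from two teams.
submissions = {
    "team_a": [121.0, 134.0, 152.0],
    "team_b": [110.0, 140.0, 160.0],
}

leaderboard = rank_submissions(hidden_labels, submissions)
```

Participants never see `hidden_labels`; they only see their score, which is what keeps the comparison honest.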

Kaggle is one of the largest platforms hosting such challenges, and they often attract thousands of participants competing for large prizes. TGS ran a seismic salt-picking contest on the platform, attracting almost 74,000 submissions from 3,220 teams with a $100k prize purse. Other contests are more grass-roots, like the one I ran with Brendon Hall in 2016 on lithology prediction, and like this SPE contest. It’s being run by a team of enthusiasts without a lot of resources from SPE, and the prize purse is only $1,000, representing about 3 hours of the fully loaded G&A of an oil industry professional.

What has this got to do with reproducibility?

Contests that award a large prize in return for solving a hard problem are essentially just a kind of RFP-combined-with-consulting-job. It’s brutally inefficient: hundreds or even thousands of people spend hours on the problem for free, and a handful are financially rewarded. These contests attract a lot of attention, but I’m not that interested in them.

Community-oriented events like this SPE contest — and the recent FORCE one that Xeek hosted — are more interesting and I believe they are more impactful. They have lots of great outcomes:

- Lots of people have fun working on a hard problem and connecting with each other.
- Solutions are often shared after, or even during, the contest, so that everyone learns and grows their toolbox.
- A new open dataset that might even become a much-needed benchmark for the task in hand.
- Researchers can publish what they did, or do later. (The SEG ML contest tutorial and results article have 136 citations between them, largely from people revisiting the dataset to show new solutions.)

A lot of new open-source machine learning code is always exciting, but if the data is not open then the work is by definition not reproducible. It seems especially unfair — cheeky, even — to ask participants to open-source their code, but to keep the data proprietary. For sure TGS is interested in how these free solutions compare to their own product.

Well, life’s not fair. Why is this a problem?

The data is being shared with the contest participants on the condition that they may not share it. In other words it’s proprietary. That means:

- Participants are encumbered with the liability of a proprietary dataset. Sure, TGS is sharing this data in good faith today, but who knows how future TGS lawyers will see it after someone accidentally commits it to their GitHub repo? TGS is a billion-dollar company; they will win a legal argument with you. (Having said that, there’s no NDA or anything, just a checkbox in a form. I don’t know how binding it really is… but I don’t want to be the one that finds out.)
- Participants can’t publish reproducible papers on their own work. They can publish classic oil-industry, non-reproducible work (“I did this thing but no-one can check it because I can’t give you the data”), but do we really need more of that? (In the contest introductory Zoom, someone asked about publishing plots of the data. The answer: “It should be fine.” Are we really still this naive about data?)

If anyone from TGS is reading this and thinking, “Come on, we’re not going to sue anyone — we’re not GSI! — it’s fine :)” then my response is: Wonderful! In that case, why not just formalize everything by releasing the data under an open licence — preferably Creative Commons Attribution 4.0? (Unmodified! Don’t make the licensing mistakes that Equinor and NAM have made recently.) That way, everyone knows their rights, everyone can safely download the data, and the community can advance. And TGS looks pretty great for contributing an awesome dataset to the subsurface machine learning community.

I hope TGS decides to release the data with an open licence. If they don’t, it feels like a rather one-sided deal to me. And with the arrangement as it stands, there’s no way I would enter this contest.

Except where noted, this content is licensed
