Helpful horizons

Ah, the smell of a new seismic interpretation project. All those traces, all that geology, perhaps unseen by humans or indeed any multicellular organism at all since the Triassic. The temptation is to Just Start Interpreting. Why, you could have a map by lunchtime tomorrow! But wait. There are some things to do first.

Once I've made sure all is present and correct (see How to QC a seismic volume), I spend a bit of time making some helpful horizons...

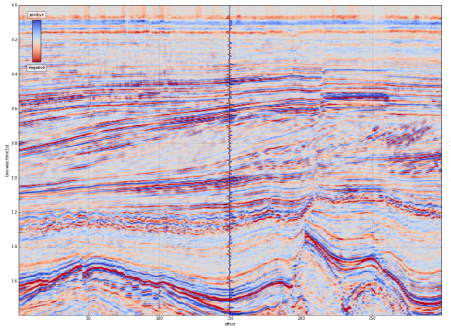

- The surface. One of the fundamental horizons, the seafloor or ground surface is a must-have. You may have received it from the processor (did you ask for it?) or it may be hidden in the SEG-Y headers — ask whoever received or loaded the data. If not, ground elevation is usually easy enough to get from your friendly GIS guru. If you have to interpret the seafloor, at least it should autotrack quite well.

- Seafloor multiple model. In marine data, I always make a seafloor multiple model — just multiply the seafloor pick by 2. This will help you make sense of any anomalous reflectors or amplitudes at that two-way time. Maybe make a 3× version too if things look really bad. Remember, the 2× multiple will be reverse polarity.

- Other multiples. You can model the surface multiple of any strong reflectors with the same arithmetic — but the chances are that any residual multiple energy is quite subtle. You may want to seek help modeling them properly, once you have a 3D velocity model.

A 2D seismic dataset with some of the suggested helpful horizons. Please see the footnote about this dataset.
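The multiple arithmetic described in the list above is simple enough to sketch in a few lines of Python. This is only an illustration: the function names, the list-of-picks representation, and the use of `None` for missing picks are assumptions, not anything your interpretation software exposes. The polarity helper assumes a sea-surface reflection coefficient close to -1 and a positive seafloor reflection coefficient, which is why the 2× multiple comes out reversed and the 3× one does not.

```python
def multiple_model(seafloor_twt_ms, order=1):
    """Predicted two-way time (ms) of a seafloor multiple.

    order=1 is the first multiple (2 x seafloor time),
    order=2 is the second multiple (3 x seafloor time).
    Missing picks (None) stay missing.
    """
    factor = order + 1
    return [None if t is None else factor * t for t in seafloor_twt_ms]


def relative_polarity(order):
    """Polarity of a multiple relative to the primary seafloor reflection.

    Assumes each extra bounce flips the sign once at the sea surface
    (reflection coefficient about -1) and that the seafloor coefficient
    is positive. Returns -1 for reversed, +1 for normal.
    """
    return (-1) ** order


# Hypothetical seafloor picks along a line, in ms two-way time
seafloor = [400.0, None, 650.0]
first_multiple = multiple_model(seafloor)            # 2 x seafloor
second_multiple = multiple_model(seafloor, order=2)  # 3 x seafloor
```

In practice you would do this with your software's horizon calculator, or on a NumPy grid, rather than on Python lists.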

- Water depth markers. I like to make flat horizons* at important water depths, e.g. the shelf edge (usually about 100–200 m), plus 1000 m, 2000 m, etc. This mainly helps to keep track of where you are, and also to think about prospectivity, accessibility, well cost, etc. You only want these to exist in the water, so delete them anywhere they are deeper than the seafloor horizon. Your software should have an easy way to implement a simple model for two-way time t in ms, given depth d in m and velocity** V in m/s:

$$ t = \frac{2000 d}{V} \approx \frac{2000 d}{1490} \qquad \qquad \mathrm{e.g.}\ \frac{2000 \times 1000}{1490} = 1342\ \mathrm{ms} $$
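As a rough sketch, the conversion above and the "only in the water" rule could be written like this. The function names and the list-of-picks layout are made up for illustration, and treating a missing seafloor pick as "no marker" is an assumption.

```python
WATER_VELOCITY = 1490.0  # m/s, the approximate value used in the formula above


def depth_to_twt_ms(depth_m, velocity=WATER_VELOCITY):
    """Two-way time in ms for a given water depth in m: t = 2000 d / V."""
    return 2000.0 * depth_m / velocity


def water_depth_marker(depth_m, seafloor_twt_ms, velocity=WATER_VELOCITY):
    """Flat marker horizon that exists only where it lies within the water.

    The marker is dropped (None) wherever it would be deeper than the
    seafloor pick, and also where there is no seafloor pick at all.
    """
    t = depth_to_twt_ms(depth_m, velocity)
    return [t if (sf is not None and t <= sf) else None
            for sf in seafloor_twt_ms]


# Hypothetical seafloor picks (ms): shallow water, deep water, no pick
seafloor = [500.0, 2000.0, None]
marker_1000m = water_depth_marker(1000.0, seafloor)
```

Here the 1000 m marker survives only at the second position, where the seafloor is deeper than about 1342 ms.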

- Hydrate stability zone. In marine data and in the Arctic you may want to model the bottom of the gas hydrate stability zone (GHSZ) to help interpret bottom-simulating reflectors, or BSRs. I usually do this by scanning the literature for reports of BSRs in the area, or data on hydrate encounters in wells. In the figure above, I just used the seafloor plus 400 ms. If you want to try for more precision, Bale et al. (2014) provided several models for computing the position of the GHSZ — thank you to Murray Hoggett at Birmingham for that tip.

- Fold. It's very useful to be able to see seismic fold on a map along with your data, so I like to load fold maps at some strategic depths or, better yet, load the entire fold volume. That way you can check that anomalies (especially semblance) don't have a simple, non-geological explanation.

- Gravity and magnetics. These datasets are often readily available. You will have to shift and scale them so that they plot at sensible values, at either the top or the bottom of your sections. Gravity can be especially useful for interpreting rifted margins.

- Important boundaries. Your software may display these for you, but if not, you can fake it. Make a horizon that exists only inside the polygon (a lease boundary, perhaps) by interpolating within it. Make this horizon flat and deep (deeper than the seismic), then merge it with a horizon that is flat and shallow (–1 ms, or anything shallower than the seismic). You should end up with almost-vertical lines at the edges of the feature.

- Section headings. I like to organize horizons into groups: stratigraphy, attributes, models, markers, etc. I make empty horizons to act only as headings so I can see at a glance what's going on. Whether you need to do this, and how you achieve it, depends on your software.
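If your software can't do the boundary trick from the list above directly, the idea can be sketched outside it. The example below is a minimal illustration, assuming a regular grid of x and y coordinates and a polygon given as a list of vertices. The function names, the default times, and the ray-casting test are choices made for this sketch, not a description of any particular package.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: True if (x, y) is inside the polygon.

    polygon is a list of (x, y) vertices; it is closed implicitly.
    """
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through y?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def boundary_horizon(xs, ys, polygon, shallow_ms=-1.0, deep_ms=10000.0):
    """Grid that is deep inside the polygon and shallow everywhere else.

    Displayed on a section, the jump between the two values plots as an
    almost-vertical line at the polygon edge.
    """
    return [[deep_ms if point_in_polygon(x, y, polygon) else shallow_ms
             for x in xs]
            for y in ys]


# Hypothetical square lease block on a tiny 3 x 3 grid
lease = [(1, 1), (3, 1), (3, 3), (1, 3)]
grid = boundary_horizon([0, 2, 4], [0, 2, 4], lease)
```

Pick `deep_ms` to be deeper than your seismic; 10000 ms is just a placeholder.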

Most of these horizons don't take long to make, and I promise you'll find uses for them throughout the interpretation project.

If you have other helpful horizon hacks, I'd love to hear about them — put your favourites in the comments.

Footnotes

* It's not always obvious how to make a flat horizon. A quick way is to take some ubiquitous horizon — the seafloor maybe — and multiply it by zero.

** The velocity of sound in seawater is not a simple subject. If you want to be precise about it, you can try this online calculator, or implement the equations yourself.
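If you do want to implement an equation yourself, one widely used option is Mackenzie's (1981) nine-term equation. It is not necessarily what any particular online calculator uses, so treat the sketch below as one possible choice; the function name is made up, and you should check the validity ranges given in the original paper before relying on it.

```python
def sound_speed_mackenzie(temp_c, salinity_ppt, depth_m):
    """Speed of sound in seawater (m/s) from Mackenzie's (1981)
    nine-term equation.

    temp_c: temperature in degrees Celsius
    salinity_ppt: salinity in parts per thousand
    depth_m: depth in metres
    """
    t = temp_c
    s = salinity_ppt - 35.0  # the equation works with salinity relative to 35
    d = depth_m
    return (1448.96
            + 4.591 * t
            - 5.304e-2 * t ** 2
            + 2.374e-4 * t ** 3
            + 1.340 * s
            + 1.630e-2 * d
            + 1.675e-7 * d ** 2
            - 1.025e-2 * t * s
            - 7.139e-13 * t * d ** 3)


# Example: 25 degC, salinity 35, 1000 m depth
v = sound_speed_mackenzie(25.0, 35.0, 1000.0)
```

For horizon building you would want some kind of average velocity over the water column, not a value at a single depth, so this is only a starting point.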

The 2D seismic dataset shown is from the Laurentian Basin, offshore Newfoundland. The dataset is copyright of Natural Resources Canada, and subject to the Open Government License – Canada. You can download it from the OpendTect Open Seismic Repository. The cultural boundary and gravity data are fictitious; I made them up for the purposes of illustration.

References

Bale, Sean, Tiago M. Alves, Gregory F. Moore (2014). Distribution of gas hydrates on continental margins by means of a mathematical envelope: A method applied to the interpretation of 3D seismic data. Geochem. Geophys. Geosyst. 15, 52–68, doi:10.1002/2013GC004938. Note: the equations are in the Supporting Information.

Except where noted, this content is licensed
